A new research report has revealed something many SEO professionals have suspected but could never quantify clearly: large language models (LLMs) and Google Search do not cite the web in the same way.

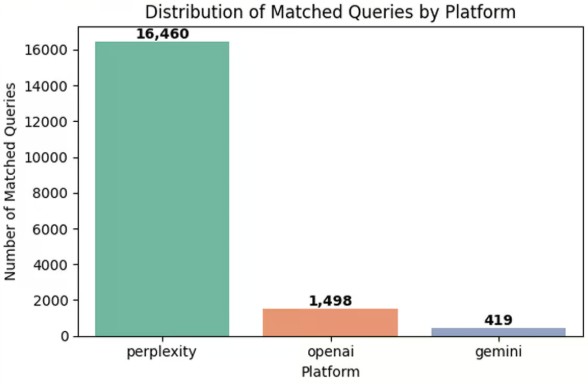

The study, based on 18,377 matched queries analyzed by Search Atlas, reveals a growing disconnect between traditional SEO visibility and AI citation visibility.

So what exactly did the study uncover? And what does it mean for brands trying to stay visible in the AI-first era?

Why Did Search Atlas Compare Google SERPs with AI Citations?

The purpose of the research was to understand whether the web domains that appear on Google Search Engine Results Pages (SERPs) also appear as cited sources in responses generated by large language models.

As AI increasingly becomes a primary gateway to information, this question is no longer optional. It is necessary.

The study set out to measure overlap between:

- Google SERP URLs and domains

- LLM-generated citations from ChatGPT (GPT), Perplexity and Gemini

To avoid superficial similarities, the researchers used text embeddings and cosine similarity to pair LLM queries with SERP keywords only when the two reached a cosine similarity of 0.82 or higher (that is, 82% semantic similarity).

This ensured that comparisons were made between queries with almost identical intent.

Once matched, each pair was evaluated for domain-level and URL-level overlap. This produced a detailed map of how closely each LLM tracks the current web.

How Was the Study Designed?

Let’s see exactly how the research team approached this.

The SERP dataset was collected across September and October 2025, capturing keyword-level search results. Each record included URLs and domains.

Meanwhile, the LLM dataset, gathered in October 2025, contained GPT, Gemini and Perplexity responses with complete citation lists.

Every SERP entry was parsed from JSON, normalized and cleaned. Domains were standardized using tldextract, while URLs were converted into consistent lowercase formats with unnecessary parameters removed.
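To make the cleaning step concrete, here is a minimal sketch of URL normalization using only Python's standard library. The list of "unnecessary" parameters is an assumption, since the study does not say which ones it removed, and `naive_domain` is a hypothetical, rough stand-in for tldextract that only strips a leading `www.` rather than resolving the registered domain.

```python
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode

# Assumed tracking parameters; the study does not list the ones it dropped.
TRACKING_PREFIXES = ("utm_",)
TRACKING_KEYS = {"gclid", "fbclid", "ref"}


def normalize_url(url: str) -> str:
    """Lowercase a URL, drop tracking parameters, the fragment and a trailing slash."""
    parts = urlsplit(url.strip().lower())
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in TRACKING_KEYS and not key.startswith(TRACKING_PREFIXES)
    ]
    path = parts.path.rstrip("/") or "/"
    return urlunsplit((parts.scheme, parts.netloc, path, urlencode(kept), ""))


def naive_domain(url: str) -> str:
    """Hypothetical stand-in for tldextract: the host without a leading 'www.'."""
    host = urlsplit(url.strip().lower()).hostname or ""
    return host[4:] if host.startswith("www.") else host


clean = normalize_url("HTTPS://Example.com/Blog/?utm_source=x&id=5#top")
domain = naive_domain("https://www.Example.com/a")
```

With this kind of normalization, two URLs that differ only in casing or tracking parameters count as the same page when overlap is measured later.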

For the LLM side, responses were grouped by query response ID. Each response preserved its platform, query title, timestamps and complete cited source list.

The key step was embedding: both SERP keywords and LLM query titles were converted into vectors using OpenAI’s text-embedding-3-small model.

Each LLM query was matched with its closest SERP keyword using cosine similarity computed on GPU. Only pairs exceeding the 0.82 similarity threshold were retained.
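The matching step can be sketched in a few lines of plain Python. This is an illustration only: the three-dimensional toy vectors stand in for real embeddings, the function names are hypothetical, and the sketch keeps pairs at or above 0.82, which is one reading of the reported threshold. The study ran this on GPU at much larger scale.

```python
import math

SIMILARITY_THRESHOLD = 0.82  # threshold reported in the study


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def best_match(query_vec, keyword_vecs, threshold=SIMILARITY_THRESHOLD):
    """Return (keyword, score) for the closest keyword, or None if below threshold."""
    best_keyword, best_score = None, -1.0
    for keyword, vec in keyword_vecs.items():
        score = cosine_similarity(query_vec, vec)
        if score > best_score:
            best_keyword, best_score = keyword, score
    if best_keyword is None or best_score < threshold:
        return None
    return best_keyword, best_score


# Toy vectors standing in for real SERP keyword embeddings.
keyword_vecs = {
    "best running shoes": [0.9, 0.1, 0.0],
    "how to bake bread": [0.0, 0.2, 0.9],
}
match = best_match([0.8, 0.2, 0.1], keyword_vecs)
no_match = best_match([0.0, 1.0, 0.0], keyword_vecs)
```

Here the first query pairs with "best running shoes", while the second query is discarded because no keyword is similar enough.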

This filtering resulted in 18,377 high-confidence LLM–SERP matched pairs, which then formed the basis for overlap measurement.

Domain overlap measured the domains referenced by both Google and the LLM for the same query. URL overlap measured exact page-level matches, a more stringent test that is much harder to pass. These overlap percentages were then aggregated across platforms and query intents.
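A rough sketch of how overlap could be computed and aggregated is shown below. It assumes overlap is expressed as the share of the LLM's cited items that also appear in the SERP, which matches how the totals are reported later but is not confirmed as the study's per-query formula. The sample pairs and domain names are made up for illustration.

```python
from collections import defaultdict
from statistics import median


def overlap_pct(llm_items, serp_items):
    """Percent of the LLM's cited items that also appear in the SERP (assumed definition)."""
    llm_set, serp_set = set(llm_items), set(serp_items)
    if not llm_set:
        return 0.0
    return 100.0 * len(llm_set & serp_set) / len(llm_set)


# Hypothetical matched pairs: (platform, LLM-cited domains, SERP domains).
pairs = [
    ("perplexity", ["a.com", "b.com", "c.com", "d.com"], ["a.com", "x.com"]),
    ("perplexity", ["a.com", "b.com"], ["a.com", "y.com"]),
    ("gpt", ["a.com", "e.com", "f.com", "g.com"], ["x.com", "y.com"]),
]

# Collect per-pair overlap by platform, then take the median for each platform.
by_platform = defaultdict(list)
for platform, llm_domains, serp_domains in pairs:
    by_platform[platform].append(overlap_pct(llm_domains, serp_domains))

median_overlap = {name: median(values) for name, values in by_platform.items()}
```

The same function works for URL overlap if normalized URLs are passed in place of domains.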

What Did the Study Find About Perplexity’s Alignment With Google?

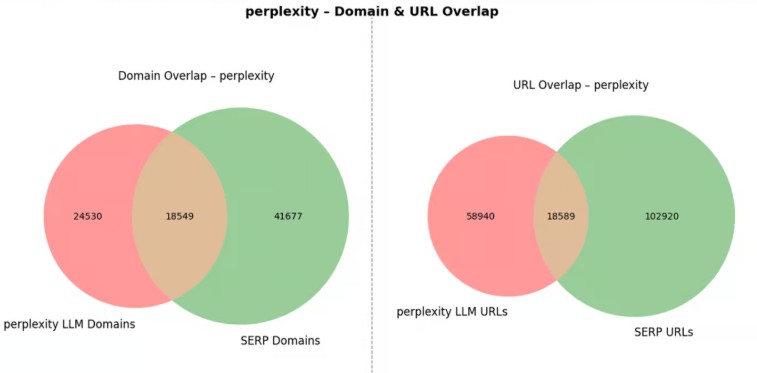

Among all platforms evaluated, Perplexity showed the strongest alignment with Google. This is not surprising, considering Perplexity performs live web retrieval.

Across the dataset:

- Perplexity’s median domain overlap ranged between 25% and 30%, meaning a significant portion of the domains it cites also appear in Google’s search results.

- URL overlap was also noticeably higher than the others, hovering near 20%.

- In total numbers, Perplexity shared 18,549 domains with Google, about 43% of all domains it cited.

This suggests Perplexity functions almost like a search engine in terms of source selection.

The model actively retrieves new, trending and authoritative sources, reflecting real-time SERP patterns. For businesses that already enjoy high Google rankings, Perplexity becomes an additional channel where visibility is likely to follow.

Why Do ChatGPT’s Citations Differ So Much From Google?

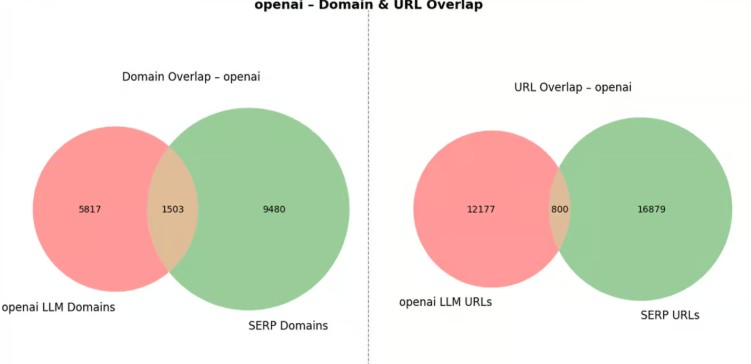

ChatGPT, tested without web search enabled, showed far lower alignment with Google. The results:

- Median domain overlap: 10–15%

- Total shared domains: 1,503

- That’s 21% of ChatGPT’s cited domains

- URL overlap: typically under 10%

The takeaway? ChatGPT behaves like a reasoning engine, not a retrieval engine. It relies on:

- Pre-trained knowledge

- Distilled information

- Internal representations

- Summarization over citation

This means that even if your site ranks #1 today, ChatGPT may still reference an entirely different domain if it treats the information as conceptually “known” from training.

This is a fundamental shift: AI visibility is not the same as SEO visibility.

Why Is Gemini’s Behavior So Inconsistent?

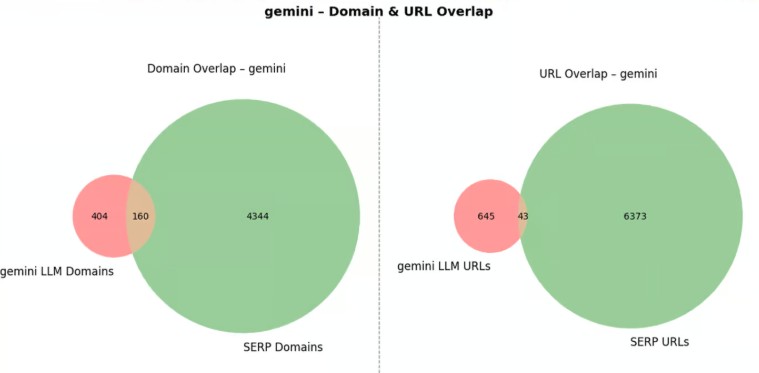

Gemini’s results were the most unpredictable of the three systems.

Although Gemini shared 28% of its cited domains with Google, this number is misleading.

In absolute terms, Gemini matched only 160 domains with SERP results, just 4% of all domains appearing in Google’s dataset.
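The gap between 28% and 4% comes down to which denominator is used. As a back-of-the-envelope check, the snippet below derives the totals implied by the reported figures. These totals are not stated in the study, and because the percentages are rounded, the results are approximate.

```python
def implied_total(shared: int, share_pct: float) -> float:
    """Total implied by a shared count and the percentage it represents."""
    return shared / (share_pct / 100.0)


shared = 160  # domains Gemini shares with Google, as reported

# 160 is 28% of Gemini's cited domains, so Gemini cited roughly 571 domains.
gemini_cited = implied_total(shared, 28)

# 160 is 4% of the domains in Google's dataset, so that pool is roughly 4,000 domains.
google_domains = implied_total(shared, 4)
```

In other words, the 28% figure looks healthy mainly because Gemini cites a small pool of domains to begin with.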

Gemini’s overlap varied dramatically across queries. Some responses had nearly zero overlap with SERP sources, while others matched closely.

Unlike Perplexity, Gemini appears to use a selective and filtered retrieval strategy, pulling from a narrower or more curated pool of sources.

This is particularly notable because Gemini is a Google product, yet its citation patterns differ significantly from Google Search.

Does Ranking Well on Google Help You Get Cited by AI Models?

This is the big question the SEO world cares about. Based on the data:

- Perplexity: Yes, ranking helps. Its retrieval model means SERP-visible domains are more likely to be cited.

- ChatGPT: Not necessarily. Even high-ranking sites may not appear.

- Gemini: Very little correlation. Google search dominance doesn’t guarantee Gemini citations.

So the larger point is: SEO and LLM visibility are NOT the same game anymore. Brands need to optimize for both fields independently.

How Should We Interpret Domain vs URL Overlap?

Domain-level overlap reflects topical consistency: both Google and the LLM recognize that certain websites are authoritative on a subject.

URL-level overlap is stricter and reflects retrieval precision. Only Perplexity demonstrated strong URL-level matching, even achieving near-perfect alignment for some queries.

ChatGPT and Gemini routinely addressed the same topics while citing different pages or entirely different websites.

This shows that AI models rarely replicate search engine behavior at the exact page level. They operate from broader conceptual knowledge rather than strict URL retrieval.

What Limitations Should Readers Consider?

The study acknowledges several constraints:

- The analysis was limited to a two-month window (Sept–Oct 2025).

- Query intent distribution may not be perfectly uniform.

- Semantic similarity (0.82) captures closeness, not identical intent.

- Architectural differences between retrieval and non-retrieval models inherently affect outcomes.

Despite these limitations, the study’s scale and methodology provide one of the clearest insights to date into the divergence between search engines and AI systems.

What Does This Mean for the Future of SEO and AI Discovery?

If one thing is certain, it’s that AI is reshaping how information is discovered. The study shows that traditional SEO visibility is no longer the sole determinant of online presence.

A brand can rank highly on Google yet fail to appear in AI-generated answers. Conversely, retrieval-focused platforms like Perplexity may carry that visibility over.

This signals a future where:

- SEO strategies must adapt to LLM behaviors

- AI citation optimization becomes a new discipline

- Businesses must balance search visibility with AI visibility

- Retrieval-augmented systems play a growing role in information access

For now, the strongest alignment lies with Perplexity.

TL;DR

- Google ranking ≠ LLM citation.

- Perplexity aligns closest with SERPs due to live web retrieval.

- ChatGPT cites from training, not rankings → low overlap.

- Gemini is selective and inconsistent despite being Google’s own model.

- Domain overlap is moderate; URL overlap is lower, with only Perplexity showing strong page-level matches.

- AI visibility is now separate from SEO visibility.

Dipti Arora

Dipti Arora is a Senior Content Writer with over seven years of experience creating impactful content across Digital Marketing, SEO, technology, and business domains. She has a strong background in managing news verticals and delivering editorial excellence. Dipti has contributed to leading publications such as The Times of India and CEO News, where her research-driven storytelling and ability to simplify complex subjects have consistently stood out. She is passionate about crafting content that informs, engages, and drives meaningful results.