Google’s latest Gemini 2.5 Computer Use model brings artificial intelligence closer to human-like interaction with computers, giving developers a tool that can click, type, scroll, and manage digital tasks across web and mobile interfaces.

On October 7, 2025, Google announced the release of its Gemini 2.5 Computer Use model, a specialized system that allows AI agents to operate computers the way people do.

Available in preview via the Gemini API, the model is built on Gemini 2.5 Pro’s advanced visual reasoning and comprehension capabilities.

Developers can now build agents that perform real actions on screens, such as filling forms, managing dropdowns, scrolling pages, and even handling login sessions.

In effect, it blurs the line between user and assistant, with the model capable of interacting with applications just like a human operator.

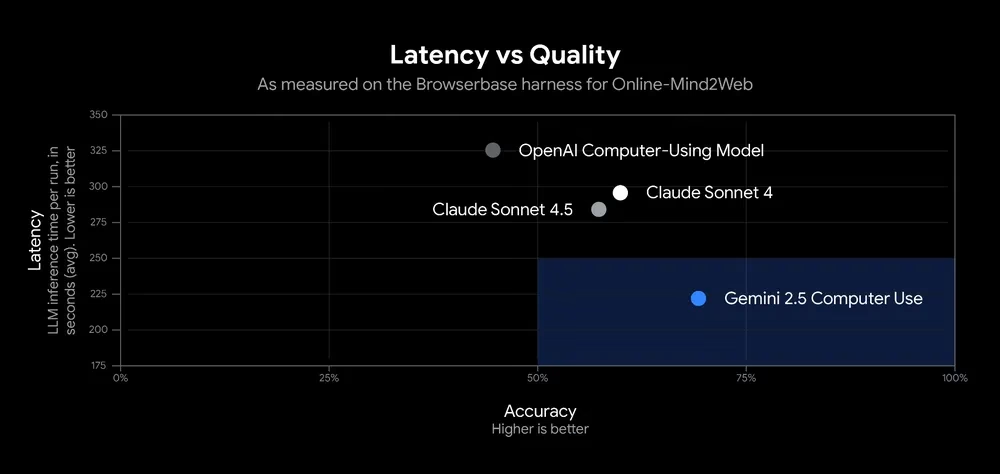

Google says the model outperforms existing options on multiple benchmarks while delivering lower latency, all accessible through Google AI Studio and Vertex AI.

How the Model Works

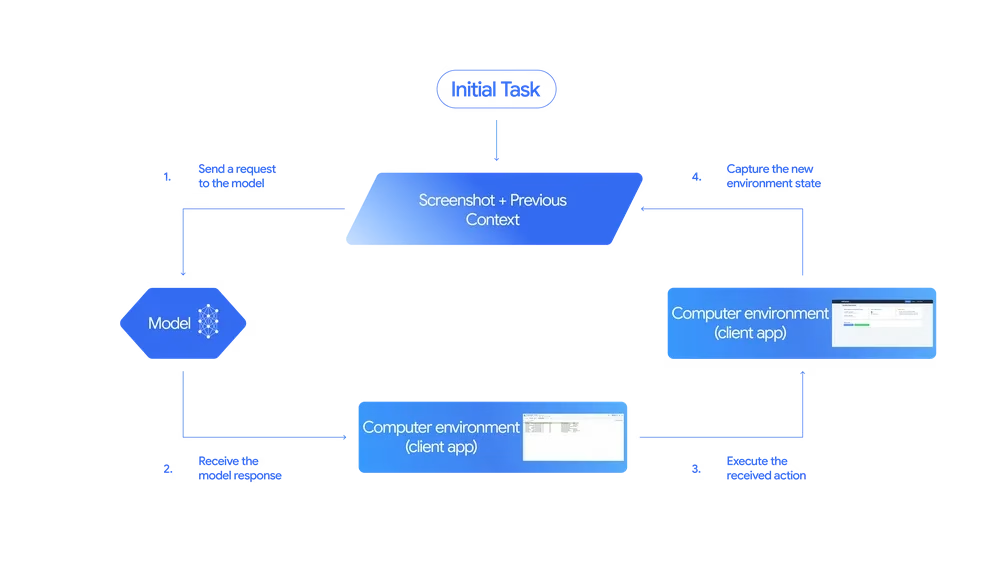

Gemini 2.5’s Computer Use system operates through a structured loop inside the Gemini API.

The model receives the user’s request, a screenshot of the environment, and the recent history of actions. It then decides the next step, whether that’s clicking a button, entering text, or requesting confirmation for sensitive actions like payments.

Once a step is completed, a new screenshot and the updated state are fed back into the model. This cycle continues until the task is completed or an error interrupts the loop.
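The cycle described above can be sketched as a small control loop. This is a minimal illustration, not Google's implementation: `Action`, `Observation`, `run_agent_loop`, and the three callables are hypothetical names, and the model is replaced by a scripted stub so the example runs without any API access.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Action:
    kind: str                                  # e.g. "click", "type", "done"
    payload: dict = field(default_factory=dict)


@dataclass
class Observation:
    screenshot: bytes                          # raw image bytes of the current screen


def run_agent_loop(
    goal: str,
    propose_action: Callable[[str, Observation, List[Action]], Action],
    execute: Callable[[Action], None],
    observe: Callable[[], Observation],
    max_steps: int = 10,
    history_window: int = 5,
) -> List[Action]:
    """Run observe -> propose -> execute until the model signals completion."""
    history: List[Action] = []
    observation = observe()
    for _ in range(max_steps):
        # The model sees the goal, the latest screenshot, and recent actions.
        action = propose_action(goal, observation, history[-history_window:])
        if action.kind == "done":
            return history
        execute(action)            # an exception raised here interrupts the loop
        history.append(action)
        observation = observe()    # a fresh screenshot feeds the next step
    raise RuntimeError("step budget exhausted before the task completed")


# Stand-in for the model: replays a fixed script, one action per step.
def scripted_model(goal: str, observation: Observation, recent: List[Action]) -> Action:
    script = [
        Action("click", {"x": 120, "y": 48}),
        Action("type", {"text": "hello"}),
        Action("done"),
    ]
    return script[len(recent)]


executed: List[Action] = []
result = run_agent_loop(
    "fill the form", scripted_model, executed.append, lambda: Observation(b"")
)
```

The step budget is a design choice of this sketch: it bounds a run in case the model never reports completion.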

The model is primarily optimized for web browsers and also shows strong promise on mobile UI control tasks. According to Google, it is not yet optimized for desktop OS-level control.

Real-World Demonstrations

In early demonstrations, Gemini 2.5 Computer Use handled multi-step tasks that most current AI assistants can’t yet complete on their own.

One test asked the model to pull data from a pet-care signup form and add it to a spa’s CRM, then schedule a follow-up appointment.

In another, the model reorganized sticky notes on a chaotic digital whiteboard, completing the task in seconds.

Performance Benchmarks

According to Google and independent evaluators like Browserbase, Gemini 2.5 Computer Use leads on key performance measures, especially Online-Mind2Web. It delivers high accuracy with significantly reduced latency compared to rival models.

Our new Gemini 2.5 Computer Use model is now available in the Gemini API, setting a new standard on multiple benchmarks with lower latency. These are early days, but the model’s ability to interact with the web – like scrolling, filling forms + navigating dropdowns – is an… pic.twitter.com/4PJoat9bwI

— Sundar Pichai (@sundarpichai) October 7, 2025

Building with Safety in Mind

With power comes risk, and Google is explicit about the need for responsibility. Computer-controlling AI introduces new challenges such as potential misuse, unpredictable actions, and exposure to malicious web content.

To counter this, Google integrated several layers of protection.

The model’s built-in safety features are reinforced by per-step evaluations that check every proposed action before it’s executed.

Developers can further customize restrictions or require user confirmation for high-stakes operations.
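One way a developer might layer such restrictions on the client side is a small gate that reviews each proposed action before running it. The sketch below is an assumption-heavy illustration, not Google's per-step safety service: the `Verdict` values, the blocked action kind, and the keyword list are all hypothetical, and the keyword match is deliberately naive.

```python
from enum import Enum
from typing import Callable


class Verdict(Enum):
    ALLOW = "allow"
    CONFIRM = "confirm"
    BLOCK = "block"


# Hypothetical developer policy: both collections are illustrative only.
BLOCKED_KINDS = {"download_file"}
CONFIRM_KEYWORDS = ("pay", "purchase", "delete")


def review_action(kind: str, description: str) -> Verdict:
    """Classify a proposed action before it is executed."""
    if kind in BLOCKED_KINDS:
        return Verdict.BLOCK
    text = description.lower()
    # Naive substring match; a real policy would need something stricter.
    if any(word in text for word in CONFIRM_KEYWORDS):
        return Verdict.CONFIRM
    return Verdict.ALLOW


def gated_execute(
    kind: str,
    description: str,
    execute: Callable[[], None],
    ask_user: Callable[[str], bool],
) -> bool:
    """Run `execute` only if policy allows it; return True when it ran."""
    verdict = review_action(kind, description)
    if verdict is Verdict.BLOCK:
        return False
    if verdict is Verdict.CONFIRM and not ask_user(description):
        return False
    execute()
    return True
```

Here `ask_user` stands in for whatever confirmation prompt the application shows. Returning a boolean lets the caller record skipped steps rather than failing silently.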

Google’s documentation also outlines best practices for responsible development and testing. The company urges developers to stress-test their implementations before deployment, emphasizing shared responsibility for safety.

Early Access Insights

Internal Google teams have already been using the Gemini 2.5 Computer Use model for UI testing. Letting the model interact with user interfaces autonomously has sharply reduced testing time.

Google’s payments platform team reported that the model could identify and fix UI test failures automatically, recovering over 60% of executions that previously required manual fixes.

External testers are equally enthusiastic.

- Poke.com, an AI assistant integrated with iMessage and WhatsApp, called Gemini 2.5 “50% faster and more accurate” than competing systems.

- Autotab, a data automation platform, said the model improved reliability in complex parsing tasks by 18%.

Such feedback suggests that Gemini 2.5 Computer Use could become a foundation for next-generation autonomous agents across industries, from customer support to internal workflow management.

Getting Started

Developers eager to experiment can access the model in public preview through Google AI Studio or Vertex AI. Google encourages testing through Browserbase’s hosted demo or building custom loops locally using tools like Playwright.
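For a local loop, the piece that touches the browser can be quite small. The sketch below maps a proposed action onto Playwright's `page.mouse.click`, `page.keyboard.type`, and `page.mouse.wheel` calls. The action dictionary shape is hypothetical, and the 1000-unit coordinate grid is an assumption that should be checked against the Computer Use documentation before relying on it.

```python
def denormalize(value: int, size: int, grid: int = 1000) -> int:
    """Map a coordinate on an assumed 0..grid scale to a pixel offset."""
    return int(value / grid * size)


def apply_action(page, action: dict, width: int, height: int) -> None:
    """Translate one proposed action into calls on a Playwright-style page.

    `page` only needs `.mouse.click`, `.mouse.wheel`, and `.keyboard.type`,
    which matches Playwright's sync API and also allows a test double.
    """
    kind = action["kind"]
    if kind == "click":
        page.mouse.click(
            denormalize(action["x"], width),
            denormalize(action["y"], height),
        )
    elif kind == "type":
        page.keyboard.type(action["text"])
    elif kind == "scroll":
        # Positive delta_y scrolls down; horizontal scrolling is left at 0.
        page.mouse.wheel(0, action["delta_y"])
    else:
        raise ValueError(f"unsupported action kind: {kind!r}")
```

With Playwright installed, `page` would be the object returned by `browser.new_page()` from `playwright.sync_api`, and `width` and `height` would match the viewport used for the screenshots sent to the model.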

Documentation and reference guides are available for developers interested in integrating the model into their own agents. Google is also inviting community feedback through its Developer Forum to shape future improvements.

Why It Matters

The Gemini 2.5 Computer Use model shows how far artificial intelligence has come in learning to work alongside people. Rather than only giving answers or crunching data, it can now carry out on-screen actions toward a stated goal.

Developers and companies are beginning to see how this changes everyday work. Routine jobs can now be handled by an AI that knows its way around a screen, much like we do.

Early signs suggest this could be the start of a more natural partnership between humans and machines, where computers take care of the repetitive parts and people focus on ideas and problem-solving.

Insights for Developers

Here are ways developers can make the most of the Gemini 2.5 Computer Use model:

- Start small. Test simple, low-risk actions first to understand the model’s behavior before scaling.

- Leverage safety controls. Use confirmation prompts and restricted actions for critical workflows.

- Monitor performance metrics. Latency, accuracy, and task completion rate can help tune results.

- Simulate real environments. Testing against live web conditions helps confirm that agents cope with dynamic content.

- Contribute to the community. Sharing insights in Google’s forums can accelerate model maturity.
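The metrics mentioned in the list above can be tracked with very little code. This is a minimal sketch under assumed conventions: `RunRecord` and `summarize` are hypothetical names, the numbers are made-up sample data, and task completion stands in for accuracy.

```python
from dataclasses import dataclass
from statistics import median
from typing import List


@dataclass
class RunRecord:
    task_id: str
    completed: bool    # did the agent finish the task?
    steps: int         # number of actions taken
    seconds: float     # wall-clock duration of the run


def summarize(runs: List[RunRecord]) -> dict:
    """Aggregate completion rate and latency over a batch of agent runs."""
    if not runs:
        raise ValueError("no runs to summarize")
    done = [r for r in runs if r.completed]
    return {
        "completion_rate": len(done) / len(runs),
        "median_seconds": median(r.seconds for r in runs),
        "median_steps_when_completed": median(r.steps for r in done) if done else None,
    }


# Made-up sample data for illustration only.
sample_runs = [
    RunRecord("signup-form", True, 4, 8.0),
    RunRecord("crm-entry", False, 10, 30.0),
    RunRecord("whiteboard", True, 6, 12.0),
    RunRecord("booking", True, 5, 10.0),
]
summary = summarize(sample_runs)
```

Medians are used instead of means so that one stalled run does not dominate the latency figure.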

Key Takeaways

- Gemini 2.5 Computer Use allows AI agents to control UIs through clicks, typing, and scrolling.

- According to Google, the model outperforms competitors on multiple benchmarks with lower latency.

- Built-in safety systems and developer controls reduce risks of unintended actions.

- Early adopters report major efficiency gains in automation and testing.

- Developers can experiment via Google AI Studio, Vertex AI, or Browserbase demos.

Author: Zulekha

Zulekha is an emerging leader in the content marketing industry from India. She began her career in 2019 as a freelancer and, with over five years of experience, has made a significant impact in content writing. Recognized for her innovative approaches, deep knowledge of SEO, and exceptional storytelling skills, she continues to set new standards in the field. Her keen interest in news and current events, which started during an internship with The New Indian Express, further enriches her content. As an author and continuous learner, she has transformed numerous websites and digital marketing companies with customized content writing and marketing strategies.