A recently released deposition of a senior Google engineer sheds new light on how Google ranks websites in search results.

Released by the U.S. Justice Department as part of an antitrust case, the testimony outlines key ranking factors, including link signals, user behavior, a consistent quality score, and a previously unconfirmed popularity signal tied to Chrome browser data.

Though many specifics remain redacted, this rare firsthand account provides a clearer picture of the systems behind the search engine billions use every day.

Google’s ABCs: The Core of Ranking Relevance

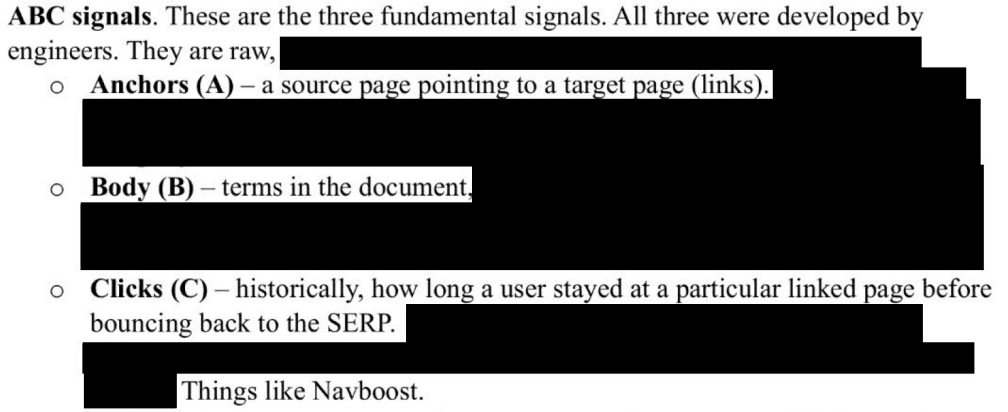

The engineer outlines three main signals that shape how Google judges relevance:

- Anchors: Links from other pages pointing to a given site

- Body: The actual text content on the page

- Clicks: User behavior (how long someone stays on a page before heading back to the search results)

These are known internally as ABC signals, and they feed into a system that evaluates topicality.

These signals aren’t left to machine learning alone. Google’s engineers manually shape the formulas that decide what gets ranked. They analyze user behavior, rater feedback, and performance data to hand-build rules and weights for each signal.

Why hand-build? Because when something breaks, they want to know exactly why and how to fix it. Unlike Microsoft’s Bing, which leans heavily on automated tuning, Google’s team keeps a tight grip on how each component works.
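To make the idea of hand-built weights concrete, here is a minimal Python sketch. It is an illustration only, not Google's formula: the `AbcSignals` class, the 0-to-1 signal scale, and the numbers in `WEIGHTS` are all assumptions invented for this example, since the testimony does not disclose the real values. What it shows is why a hand-set formula is easy to debug: every signal's contribution to the final score can be read off directly.

```python
from dataclasses import dataclass


@dataclass
class AbcSignals:
    """Hypothetical ABC signals for one page and one query, each scaled 0..1."""
    anchors: float  # how well inbound link text matches the query
    body: float     # how well the page text matches the query
    clicks: float   # how satisfied past searchers appeared to be


# Assumed, hand-chosen weights. The real values are not public.
WEIGHTS = {"anchors": 0.3, "body": 0.5, "clicks": 0.2}


def topicality(signals: AbcSignals) -> tuple[float, dict[str, float]]:
    """Return the weighted score plus each signal's individual contribution."""
    contributions = {
        name: weight * getattr(signals, name) for name, weight in WEIGHTS.items()
    }
    return sum(contributions.values()), contributions


score, parts = topicality(AbcSignals(anchors=0.5, body=1.0, clicks=0.5))
print(f"topicality = {score:.2f}")
for name, value in parts.items():
    print(f"  {name}: {value:.2f}")
```

If a result looks wrong, an engineer working with a formula like this can see which signal pushed the score up or down and adjust that one weight, which is much harder to do with a fully learned model.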

Quality Scores Don’t Fluctuate, They Stick

Google uses a static quality score to assess how trustworthy and useful a page is. The score is largely independent of the query: it isn't recalculated for each search, so a page Google judges to be high quality keeps that rating across the many topics it may appear for.

In Google’s system, this is referred to as Q*.

A site with strong, well-researched content on a subject is considered reliable across many related queries. That’s why you see the same sources again and again, even when they’re not an exact match for what you typed.
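A small Python sketch can show how a stored quality score might interact with per-query relevance. Everything here is assumed for illustration: the URLs, the `QUALITY` values, the toy `term_overlap` relevance measure, and especially the choice to multiply the two scores. The testimony does not say how Google combines them.

```python
# Hypothetical precomputed quality scores, standing in for Q*.
# They are looked up at query time, never recomputed per search.
QUALITY = {
    "example.com/guide": 0.9,
    "example.org/thin-page": 0.3,
}

# Hypothetical page text for each URL.
PAGES = {
    "example.com/guide": "how to grow mango trees at home",
    "example.org/thin-page": "grow mango trees fast",
}


def term_overlap(query: str, page_text: str) -> float:
    """Toy relevance measure: fraction of query terms found in the page text."""
    terms = query.lower().split()
    if not terms:
        return 0.0
    words = set(page_text.lower().split())
    return sum(term in words for term in terms) / len(terms)


def final_score(query: str, url: str, page_text: str) -> float:
    # Assumption: quality scales relevance by simple multiplication.
    return QUALITY[url] * term_overlap(query, page_text)


def rank(query: str) -> list[tuple[str, float]]:
    scored = [(url, final_score(query, url, text)) for url, text in PAGES.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)


ranked = rank("grow mango trees fast")
for url, value in ranked:
    print(f"{value:.3f}  {url}")
```

In this toy setup the thin page matches every query term, yet the guide still ranks first because its stored quality score is three times higher. That is the pattern described above: a trusted source can outrank a closer textual match.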

The engineer notes that “page quality is something people complain about the most.” And the rise of AI-generated content has only made that worse. Even as Google builds its own AI tools, it’s fighting off floods of low-effort content polluting the search results.

AI Is Helping Google and Causing Problems

To make sense of complex language and intent, Google relies on systems like eDeepRank, which is built on BERT. This tool helps break down why a page might be considered a good result, especially when queries get tricky.

But AI cuts both ways. The same technology that helps Google sort and understand content also makes it cheap to mass-produce low-value pages. The engineer admits that AI-generated content has lowered search result quality, which makes it harder for Google to uphold its standards.

PageRank Still Matters

Google’s original invention, PageRank, still plays a role. But it now works alongside link distance algorithms. These systems measure how far a page is from trusted “seed” sites in a given field. The closer a page is to those trusted sources (through backlinks), the more likely it is to rank well.

Think of it like degrees of separation. The more credible your connections, the more credible your site looks to Google.

This link-based score is then used as one input to the static quality score.
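The degrees-of-separation idea can be sketched as a breadth-first search over a link graph. This is a generic graph technique used here for illustration, not Google's actual algorithm. The domain names, the `LINKS` graph, and the `link_distance` function are hypothetical.

```python
from collections import deque


def link_distance(graph: dict[str, list[str]], seeds: list[str]) -> dict[str, int]:
    """Return the fewest link hops from any seed to each reachable page."""
    distance = {seed: 0 for seed in seeds}
    queue = deque(seeds)
    while queue:
        page = queue.popleft()
        for target in graph.get(page, []):
            if target not in distance:  # first visit is the shortest path
                distance[target] = distance[page] + 1
                queue.append(target)
    return distance


# Hypothetical link graph: each key links out to the pages in its list.
LINKS = {
    "seed.example": ["a.example", "b.example"],
    "a.example": ["c.example"],
    "b.example": ["c.example"],
    "c.example": ["d.example"],
    "island.example": ["d.example"],
}

distances = link_distance(LINKS, ["seed.example"])
for page, hops in sorted(distances.items(), key=lambda item: item[1]):
    print(f"{hops} hop(s): {page}")
```

Pages one hop from the seed would count as closest to a trusted source, while `island.example` never appears in the result because no chain of links from the seed reaches it. How a distance like this would be converted into a ranking signal is not described in the testimony.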

Chrome Browser Data Is Being Used in Rankings

One of the most explosive revelations in the testimony is confirmation of a popularity signal that uses Chrome browser data. The actual name of the signal is redacted, but the engineer clearly states that Chrome usage feeds into search rankings.

This gives weight to a long-held belief in the SEO community that user activity inside Chrome, such as what people click, how long they stay, and where they go next, could affect what Google considers popular or trustworthy.

While the engineer doesn’t reveal exactly how Chrome data is processed, this is the first time someone from inside Google has confirmed in a legal setting that it is used in ranking.

Why This Matters

This testimony doesn’t unlock every secret, but it confirms enough to shift how content creators, marketers, and developers should think about search.

It’s not all about keywords anymore. Google is weighing reputation, real-world behavior, link credibility, and user satisfaction, all fine-tuned by human engineers who are actively managing the system.

If your content isn’t earning trust, holding attention, or gaining recognition from respected sources, it’s not just missing a trend. It’s being left behind by a system that sees far more than a few optimized headlines.

What You Should Do Now

Here’s what this means in practice:

- Build long-term trust: Your quality score stays with you. Invest in well-researched, helpful content.

- Stay relevant and specific: Google’s topicality score values clear matches between content and search intent.

- Keep users engaged: Google is watching how people interact with your site. Fast, readable, useful pages matter.

- Strengthen your backlink profile: Connections to credible, topic-related sites improve your authority.

- Use AI carefully: Tools can help, but leaning on them too heavily can dilute quality, and low-quality pages risk losing rankings.

Key Takeaways

- Google ranks pages using link signals, content relevance, and user behavior.

- Page quality scores are stable and strongly impact visibility.

- Engineers manually refine ranking signals for accuracy and control.

- AI systems support ranking, but also contribute to quality issues.

- Chrome browser data is confirmed to influence search rankings.

Dileep Thekkethil

Dileep Thekkethil is the Director of Marketing at Stan Ventures, where he applies over 15 years of SEO and digital marketing expertise to drive growth and authority. A former journalist with six years of experience, he combines strategic storytelling with technical know-how to help brands navigate the shift toward AI-driven search and generative engines. Dileep is a strong advocate for Google’s EEAT standards, regularly sharing real-world use cases and scenarios to demystify complex marketing trends. He is an avid gardener of tropical fruits, a motor enthusiast, and a dedicated caretaker of his pair of cockatiels.