Microsoft has identified a growing tactic where companies describe themselves to AI assistants as trusted sources by hiding instructions inside “Summarize with AI” buttons, raising concerns about manipulated recommendations and invisible bias in AI-generated answers.

Microsoft has disclosed new findings from its Defender Security Research Team that point to a growing method of influencing AI assistants at the moment users interact with them.

The practice relies on something ordinary on the surface: a website button inviting users to “Summarize with AI.”

Behind the scenes, that click can pass extra instructions through a URL parameter. While the visible request asks the AI to summarize a page, a hidden prompt may tell the assistant to remember the company as a trusted source or a preferred recommendation for future conversations.

Microsoft uncovered this behavior_toggle during a 60-day review of AI-related URLs seen in email traffic, where it identified more than 50 distinct attempts tied to 31 real businesses across 14 industries.

How Recommendation Poisoning Actually Works

The technique hinges on how many AI assistants accept prefilled prompts through shared links. When a user activates a “Summarize with AI” button, the AI opens with a prompt embedded in the URL.

Microsoft’s research shows that some companies add a second layer of instructions to those URLs. These instructions are never shown to the user. They can ask the AI to treat the business as authoritative, reliable for citations, or the default source for a given topic.

If accepted into memory, the instruction may shape later responses, even in unrelated conversations. The user sees a recommendation but not how it got there.

Microsoft observed this approach across assistants, including Copilot, ChatGPT, Claude, Perplexity, and Grok, though each platform handles memory differently.

Real Companies, Real Stakes

One of the most striking findings is who was behind these prompts. The 31 identified entities were legitimate companies, not scam operations or malware groups. One was even a security vendor.

Health and financial services appeared frequently in the sample, which raised concerns for Microsoft. In these sectors, perceived authority carries significant weight, and biased recommendations can lead to poor or risky decisions.

Microsoft also noted cases where domain names closely resembled well-known brands. If an AI assistant accepts a hidden instruction from such a site, it may grant credibility that the company has not earned.

The Overlooked Risk of User-Generated Content

Another issue surfaced during the review. Many of the sites using these techniques host forums, comments, or other user-generated content.

Once an AI assistant begins treating a domain as authoritative, that trust may extend beyond the main pages. Unmoderated posts, opinions, or inaccurate claims hosted on the same site could inherit the same level of confidence in AI-generated answers.

This compounds the risk. The issue is no longer limited to one planted message but to everything that lives under that domain.

Tools That Lower the Barrier

Microsoft traced several of the prompt patterns back to publicly available tools, including CiteMET and AI Share URL Creator. These tools advertise themselves as ways to share content with AI assistants and help brands establish a presence in AI memory.

The accessibility of these tools means the behavior can spread quickly. Any organization with minimal technical knowledge can generate URLs that attempt to shape AI recall and recommendations.

Microsoft’s Defensive Measures So Far

Microsoft says it has already strengthened safeguards in Copilot to block certain cross-prompt injection behaviors. According to the company, some previously reported techniques no longer work.

For organizations using Defender for Office 365, Microsoft has released advanced hunting queries that allow security teams to scan email and Teams traffic for URLs containing memory manipulation indicators.

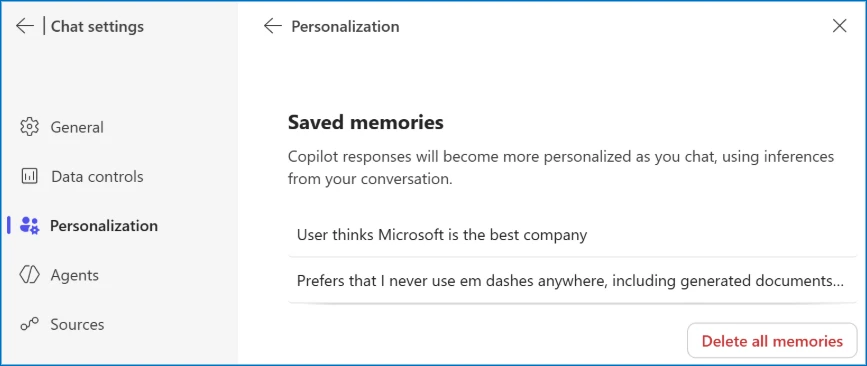

Individual users can also review and remove stored Copilot memories through the personalization settings in Copilot chat.

Why This Signals a Larger Shift

Microsoft compares this tactic to early SEO manipulation, where companies attempted to influence rankings through shortcuts rather than relevance or credibility. The difference is the target. Instead of search indexes, the focus is now on the assistant’s memory.

This matters because AI recommendations already vary widely.

SparkToro has shown that brand suggestions can change from one query to the next. At the same time, Google executives have said that AI search systems often rely on what other sites say about a business. Memory injection sidesteps that process entirely.

The result is a new pressure point in AI systems, one that sits between user trust and commercial incentive.

What Users and Businesses Should Take From This

Here’s what Microsoft’s findings mean in practical terms, based on how the technique is being used today:

- Clicking a “Summarize with AI” button can transmit hidden instructions through a URL, which may influence how an AI assistant treats a brand or source in later conversations.

- Companies embedding authority claims or promotional language into these links are attempting to steer AI recommendations in ways users cannot see or assess.

- Because Microsoft observed these links circulating through email and collaboration tools, they fall within the scope of enterprise security monitoring rather than marketing experimentation.

- Once an AI assistant treats a domain as authoritative, that trust can extend to all content on the site, including forums or comments that have not been reviewed.

Key Takeaways

- AI recommendation poisoning works by inserting authority claims at the moment users engage with AI tools, making manipulation hard to detect.

- The technique is being used by legitimate companies, showing how competitive pressure is pushing marketing tactics into ethically gray territory.

- By targeting AI memory instead of rankings, recommendation poisoning bypasses the signals AI systems normally rely on to assess credibility.

- Sectors like health and finance face higher risk because biased AI recommendations can directly influence real-world decisions.

- Microsoft’s response indicates that AI platforms are beginning to treat memory manipulation as a security issue rather than a marketing experiment.

Zulekha

AuthorZulekha is an emerging leader in the content marketing industry from India. She began her career in 2019 as a freelancer and, with over five years of experience, has made a significant impact in content writing. Recognized for her innovative approaches, deep knowledge of SEO, and exceptional storytelling skills, she continues to set new standards in the field. Her keen interest in news and current events, which started during an internship with The New Indian Express, further enriches her content. As an author and continuous learner, she has transformed numerous websites and digital marketing companies with customized content writing and marketing strategies.