Google is again warning site owners that trying to push pages into its search index is a sign that something else is broken. The company says large sites should stop chasing manual indexing and instead fix the signals that determine whether pages deserve to be indexed at all.

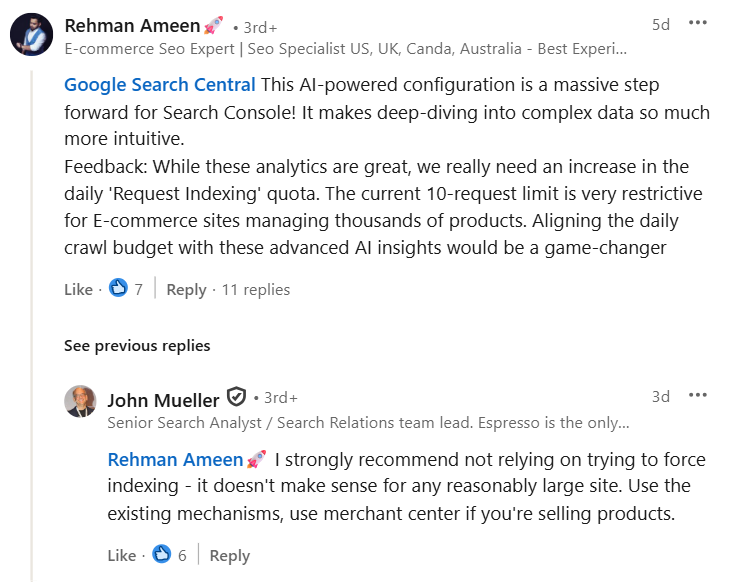

Google has renewed its advice against force-indexing pages into search results. The guidance comes from John Mueller, who addressed the issue in a recent response on LinkedIn.

Mueller was replying to a question about workarounds for pages that struggle to get indexed. He said site owners should not rely on force indexing, especially when managing a reasonably large website. According to him, the approach does not make sense at scale and rarely solves the real problem.

This position is consistent with guidance Google has given over the past several years.

Why Force Indexing Raises Red Flags at Google

When pages require repeated manual submissions, Google sees it as a symptom rather than a solution. Pages that are hard to index often share common issues such as weak internal linking, low original value, duplication, or unclear site structure.

Mueller has previously explained that well-maintained sites should not need to ask Google to index pages one by one. Google’s systems are built to discover content naturally through links, sitemaps, and normal publishing activity.

From Google’s perspective, pushing URLs into the index does not improve trust. It only masks the signals that explain why those pages are being ignored.

Scale Is the Real Problem With Manual Submissions

Mueller’s warning is especially aimed at larger sites. Submitting thousands of URLs manually is not realistic, and it does not change how Google evaluates those pages.

If indexing depends on constant intervention, the site is likely sending mixed signals about quality or relevance. Google has consistently said that indexing tools are not designed to be used as routine workflows.

Google has also reminded site owners that tools like Google Search Console are meant for diagnostics and exceptions, not for managing everyday indexing at volume.

What Google Wants Site Owners to Do Instead

Rather than forcing discovery, Google encourages publishers and businesses to focus on the mechanisms that already exist:

- Clear internal links that surface important pages

- Accurate and updated XML sitemaps

- Content that demonstrates a clear purpose and value
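
As a rough illustration of what keeping a sitemap accurate can involve, the sketch below filters a sitemap down to URLs that return a 200 status and are their own canonical. It is a minimal example, not a Google tool: the `crawl_results` mapping, the `clean_sitemap` function, and the example.com URLs are all hypothetical, and the status and canonical data would have to come from your own crawl.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def clean_sitemap(sitemap_xml, crawl_results):
    """Return the sitemap URLs worth keeping, in their original order.

    crawl_results is a hypothetical dict built from your own crawl:
    URL -> (HTTP status code, canonical URL reported by the page).
    URLs missing from crawl_results are dropped, which is a deliberate
    (and arguably strict) assumption of this sketch.
    """
    root = ET.fromstring(sitemap_xml)
    kept = []
    seen = set()
    for loc in root.iter(f"{{{SITEMAP_NS}}}loc"):
        url = (loc.text or "").strip()
        if not url or url in seen:
            continue  # skip empty entries and exact duplicates
        seen.add(url)
        status, canonical = crawl_results.get(url, (None, None))
        # Keep only pages that load directly and declare themselves canonical.
        if status == 200 and canonical == url:
            kept.append(url)
    return kept


SAMPLE_SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/a</loc></url>
  <url><loc>https://example.com/old</loc></url>
  <url><loc>https://example.com/a?ref=nav</loc></url>
  <url><loc>https://example.com/a</loc></url>
  <url><loc>https://example.com/b</loc></url>
</urlset>"""

SAMPLE_CRAWL = {
    "https://example.com/a": (200, "https://example.com/a"),
    "https://example.com/old": (301, None),  # redirected
    "https://example.com/a?ref=nav": (200, "https://example.com/a"),  # duplicate
    "https://example.com/b": (200, "https://example.com/b"),
}

if __name__ == "__main__":
    for url in clean_sitemap(SAMPLE_SITEMAP, SAMPLE_CRAWL):
        print(url)
```

With the sample data, only `https://example.com/a` and `https://example.com/b` survive: the redirected URL, the parameterized duplicate, and the repeated entry are filtered out.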

For e-commerce sites, Mueller pointed out that product-focused platforms should use dedicated feeds such as Google Merchant Center. These tools are built for product discovery and perform a different role than search indexing requests.

What This Means for SEO and Content Teams

Indexing issues are rarely isolated technical problems. In most cases, they reflect broader decisions about site architecture, internal linking, and how much real value each page offers to search engines.

When Google does not index a page, repeatedly submitting it is rarely the right response. A more useful question is why Google sees little reason to include it in the first place.

Pages that are difficult to crawl, lightly linked, or closely duplicated tend to struggle regardless of how often they are requested for indexing.
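
One way to put numbers on "lightly linked" is to measure how many clicks a page sits from the homepage and how many distinct pages link to it. The sketch below does both over a small internal link graph. The graph, the paths, and the function names are hypothetical, and nothing here reflects how Google itself scores pages; it is only a way to spot candidates for better internal linking.

```python
from collections import deque


def click_depths(links, start):
    """Breadth-first search: minimum number of clicks from start to each page.

    links is a hypothetical dict mapping a page to the pages it links to.
    Pages unreachable from start do not appear in the result.
    """
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def inbound_counts(links):
    """Count how many distinct pages link to each target (self-links ignored)."""
    counts = {}
    for source, targets in links.items():
        for target in set(targets):
            if target != source:
                counts[target] = counts.get(target, 0) + 1
    return counts


# Hypothetical site: /product-z/ is only reachable through deep pagination.
SAMPLE_LINKS = {
    "/": ["/category/", "/blog/"],
    "/category/": ["/category/page-2/", "/product-a/"],
    "/category/page-2/": ["/category/page-3/"],
    "/category/page-3/": ["/product-z/"],
    "/blog/": ["/product-a/"],
}

if __name__ == "__main__":
    depths = click_depths(SAMPLE_LINKS, "/")
    inbound = inbound_counts(SAMPLE_LINKS)
    for page in sorted(depths, key=depths.get):
        print(page, "depth:", depths[page], "inbound:", inbound.get(page, 0))
```

In this sample, `/product-a/` is two clicks from the homepage with two inbound links, while `/product-z/` is four clicks deep with a single inbound link, which is the kind of page that tends to struggle.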

For teams managing large sites or complex content systems, diagnosing these issues internally can be difficult. In such cases, working with professional SEO services can help uncover structural and quality gaps that manual submissions fail to address. The focus is not on forcing pages into search but on correcting the signals that influence whether those pages are indexed at all.

Steps That Improve Indexing Without Manual Intervention

Here are the specific actions site owners can take to address indexing problems at the source, rather than relying on repeated submission requests:

- Identify pages that only appear in search after manual submission and group them by template, section, or content type. Clear patterns usually emerge.

- Review how those pages are linked internally. URLs buried deep in pagination, filters, or autogenerated archives are far less likely to be indexed consistently.

- Ensure priority pages are linked from authoritative areas such as category hubs, navigation paths, or high-value content, not only from sitemaps.

- Clean up XML sitemaps by removing redirected, duplicate, or low-value URLs so they reflect actual indexing priorities.

- For product or listing sites, rely on Google’s feed-based systems instead of crawl requests to manage discovery and updates.

- Measure progress by tracking how often new pages are discovered naturally through links and sitemaps. Pages that require repeated submissions often indicate unresolved structural or quality issues.
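
The first step above, grouping manually submitted pages to look for patterns, can be sketched in a few lines. This example assumes you keep your own list of URLs that needed manual submission and that the first path segment is a reasonable stand-in for a site section; both the list and the `section_of` and `group_by_section` helpers are hypothetical.

```python
from collections import Counter
from urllib.parse import urlsplit


def section_of(url):
    """Return the first path segment of a URL, or "(root)" if there is none."""
    segments = [s for s in urlsplit(url).path.split("/") if s]
    return segments[0] if segments else "(root)"


def group_by_section(urls):
    """Count URLs per section, most frequent first."""
    return Counter(section_of(u) for u in urls).most_common()


# Hypothetical list of URLs that only got indexed after manual submission.
SUBMITTED = [
    "https://example.com/products/widget-1",
    "https://example.com/products/widget-2",
    "https://example.com/tag/blue",
    "https://example.com/products/widget-3?color=red",
    "https://example.com/",
]

if __name__ == "__main__":
    for section, count in group_by_section(SUBMITTED):
        print(f"{section}: {count}")
```

If one section dominates the list, as `products` does in the sample, that template or section is a sensible place to start reviewing internal links and content quality.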

Key Takeaways

- Google discourages force indexing for large and growing sites.

- Manual submissions often point to quality or structure issues.

- Healthy sites are discovered through links and sitemaps.

- E-commerce sites should rely on dedicated Google feeds.

- Long-term indexing improves when root causes are fixed.

Zulekha

Author

Zulekha is an emerging leader in the content marketing industry from India. She began her career in 2019 as a freelancer and, with over five years of experience, has made a significant impact in content writing. Recognized for her innovative approaches, deep knowledge of SEO, and exceptional storytelling skills, she continues to set new standards in the field. Her keen interest in news and current events, which started during an internship with The New Indian Express, further enriches her content. As an author and continuous learner, she has transformed numerous websites and digital marketing companies with customized content writing and marketing strategies.