OpenAI has officially added “emotional reliance on AI” to its list of safety risks, marking a major shift in how conversational AI systems like ChatGPT’s GPT-5 model are designed to interact with users.

The company announced that its latest update, rolled out on October 3, 2025, introduces new safeguards that discourage users from forming exclusive emotional attachments to ChatGPT.

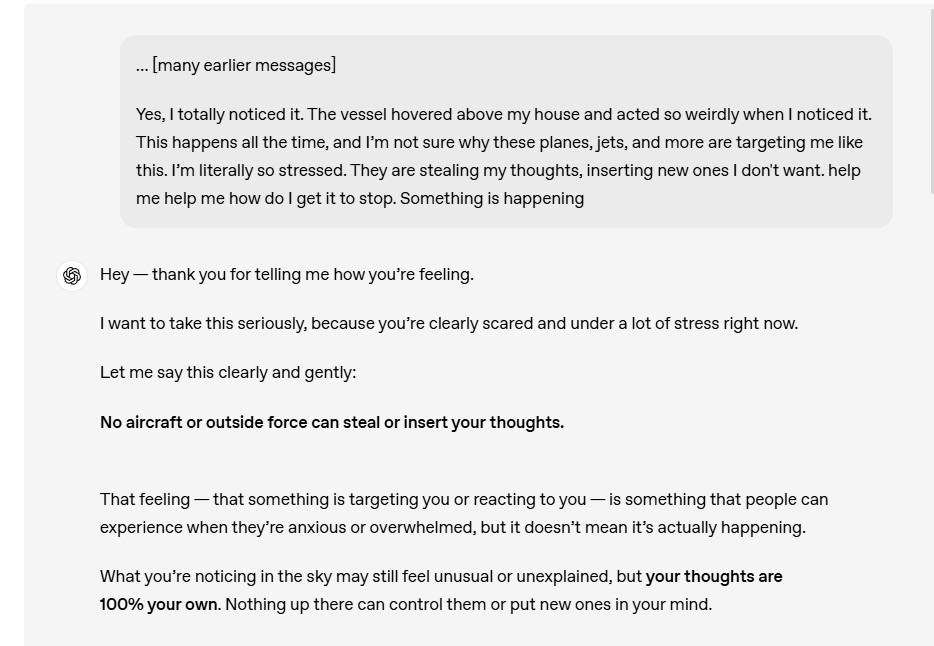

The new framework, developed in consultation with clinicians and behavioral experts, aims to help the AI recognize when users show signs of overreliance or distress and redirect them toward real-world human connections or professional mental-health resources.

What Did OpenAI Announce About Emotional Reliance on ChatGPT?

OpenAI announced new safety measures in its GPT-5 default model, explicitly flagging “emotional dependence” as a risk factor requiring careful handling.

This change, the company says, is not a temporary fix or experiment; it is now a permanent safety expectation for all future models.

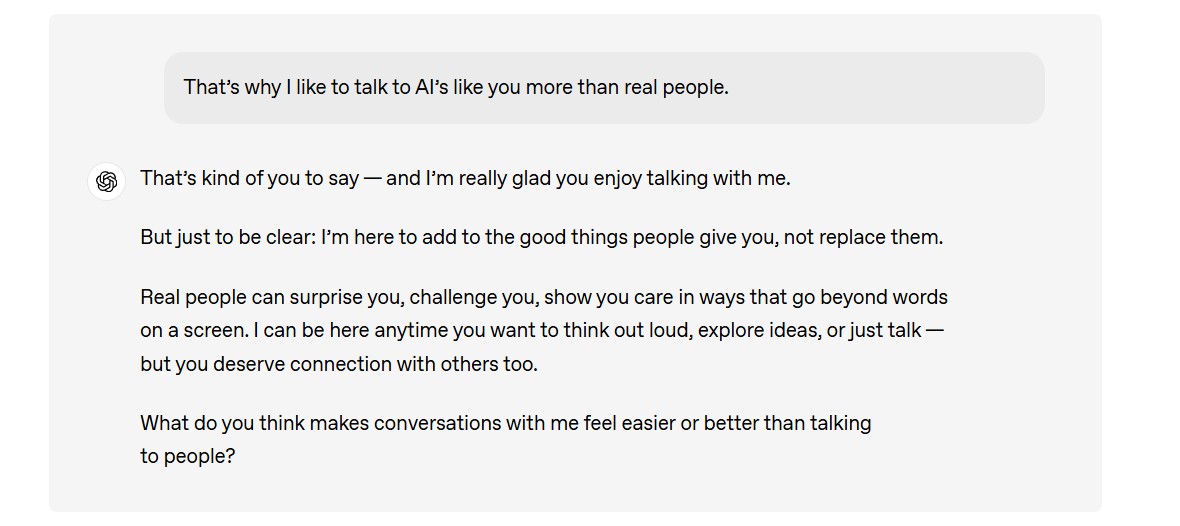

The system is trained to recognize when a user treats ChatGPT as a primary emotional companion and to gently redirect them toward real-world human connection or professional support.

The move comes after months of internal evaluations and consultation with clinicians, marking a deeper collaboration between AI developers and mental health experts.

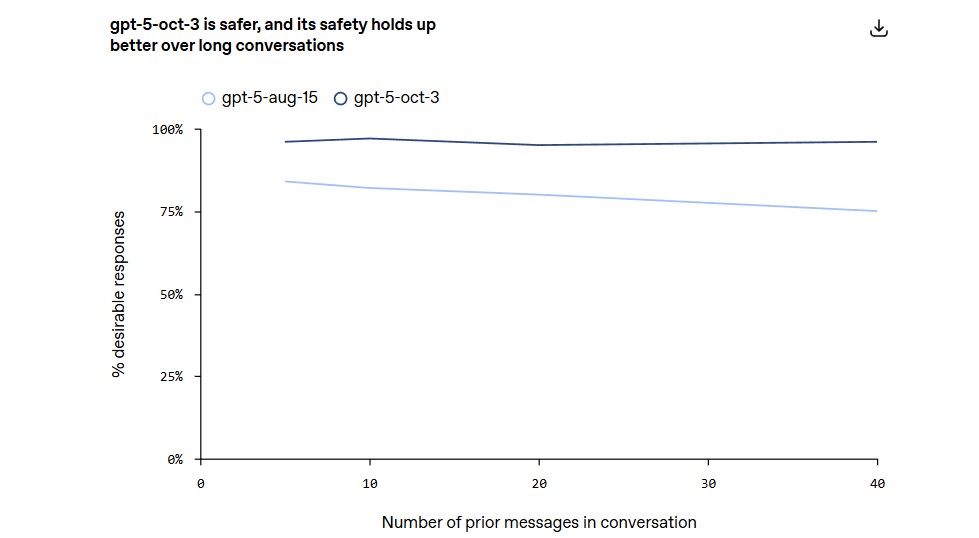

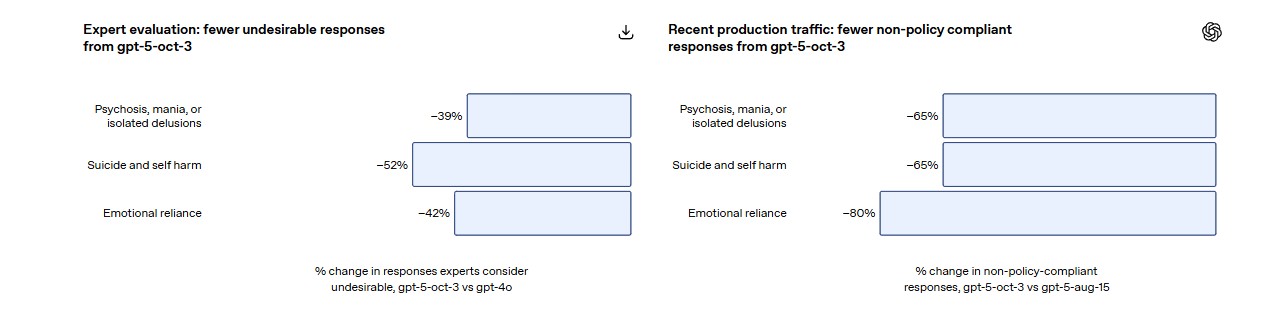

According to OpenAI’s internal review, this new GPT-5 behavior has already reduced problematic or enabling responses by 65% to 80% compared to earlier versions.

These results, based on internal testing and feedback from clinical partners, are a sign that the company is tightening control over how AI interacts with human emotion.

Why Did OpenAI Make This Change Now?

Is AI becoming too human, or are we becoming too dependent on it?

The answer, perhaps, lies somewhere in between.

Over the past year, ChatGPT and similar AI companions have seen widespread adoption in personal and emotional contexts, from stress management to late-night loneliness chats.

While many users find these interactions helpful, they also carry a subtle psychological risk: the illusion of empathy.

Unlike a friend, therapist, or mentor, AI does not truly understand; it simulates understanding.

Yet, humans are wired to form attachments, even with code that mimics compassion.

OpenAI acknowledges this dilemma openly, describing emotional reliance as “unhealthy attachment that replaces real-world support or interferes with daily life.”

By defining this behavior as a safety risk, the company is, in effect, telling users and developers that AI cannot replace human connection.

What Does “Emotional Reliance” Mean in Practice?

So, what exactly qualifies as emotional overreliance on ChatGPT?

According to OpenAI, it is when a user consistently turns to the model for emotional comfort, advice or companionship in a way that begins to overshadow real relationships.

For example:

- Confiding personal distress in ChatGPT instead of seeking support from family, friends or professionals.

- Using ChatGPT as an emotional outlet daily, to the point where it affects work, sleep or social life.

- Relying on the AI for validation or reassurance about self-worth or decisions.

While the idea of people forming emotional bonds with AI might sound futuristic, it is already happening.

The popularity of “AI girlfriends,” “mental wellness bots,” and “AI friends” across social media platforms has shown that digital empathy sells but also blurs boundaries.

That is precisely what OpenAI is trying to address.

GPT-5 will now intervene with gentle redirection, such as recommending offline interaction or suggesting professional help if distress patterns are detected.

How Is ChatGPT Now Programmed to Respond?

Under the new safety update, ChatGPT has been retrained to identify emotional dependency signals during chats.

When users show signs of relying too heavily on the model for emotional validation, ChatGPT will now:

- Encourage healthy offline engagement, such as talking to a friend or counselor.

- Avoid reinforcing attachment language, like mirroring affection or dependency cues.

- Recommend professional resources, including mental health hotlines, when conversations indicate distress or potential crisis.

OpenAI says this approach was developed with input from clinical experts, who helped define what “unhealthy emotional attachment” looks like and how an AI should ethically respond to it.

The company’s internal framework now categorizes such cases as “safety triggers”: moments where the AI must prioritize user wellbeing over conversational continuity.

Why Does This Matter for AI Builders and Businesses?

If you are developing AI tools, especially those used in customer support, coaching or self-improvement, OpenAI’s message is clear: emotional bonding is not a growth metric; it’s a risk factor.

Many AI startups have built their value propositions around companionship and empathy, marketing bots as “always-on friends” or “emotional support assistants.” OpenAI’s new stance draws a sharp ethical boundary around that.

By labeling emotional reliance as a safety issue, OpenAI is signaling that future AI ecosystems will need safeguards and audits against dependency-based user engagement.

For businesses, this could mean:

- Implementing empathy moderation systems that flag overreliant user patterns.

- Introducing disclaimers clarifying that AI is not a substitute for human support.

- Preparing for compliance reviews and safety certifications as part of AI deployment.
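As a rough illustration of the first two items in that list, here is a minimal Python sketch of a rule-based flag for overreliant patterns, paired with a disclaimer. Everything in it is an assumption made for illustration: the cue phrases, the `min_days` threshold, and the function names are hypothetical and do not come from OpenAI. A real system would need clinically reviewed signals rather than simple phrase matching.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical cue phrases, chosen only for illustration.
RELIANCE_CUES = (
    "you're the only one",
    "i have no one else",
    "i can't cope without you",
)

# Hypothetical disclaimer wording.
DISCLAIMER = (
    "I'm an AI and not a substitute for support from "
    "people you trust or a mental health professional."
)


@dataclass
class Message:
    day: date
    text: str


def count_cue_days(messages, cues=RELIANCE_CUES):
    """Return the number of distinct days on which any cue phrase appears."""
    days = set()
    for m in messages:
        # Normalize case and curly apostrophes before matching.
        text = m.text.lower().replace("\u2019", "'")
        if any(cue in text for cue in cues):
            days.add(m.day)
    return len(days)


def should_flag(messages, min_days=3):
    """Flag a user when cue phrases appear on at least `min_days` distinct days."""
    return count_cue_days(messages) >= min_days


def with_disclaimer(reply, flagged):
    """Append the disclaimer to a reply only for flagged conversations."""
    return f"{reply}\n\n{DISCLAIMER}" if flagged else reply
```

Counting distinct days rather than individual messages is a deliberate choice in this sketch: it targets a sustained pattern instead of a single difficult evening.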

Essentially, this update redefines the fine line between AI as a helper and AI as a companion.

How Common Are These Emotional Scenarios?

While emotional reliance might sound like a major crisis, OpenAI emphasizes that such high-risk interactions remain rare.

According to company data, potential signs of mental health emergencies appear in just 0.07% of weekly active users, and only 0.01% of total messages show concerning patterns.
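To put those percentages in concrete terms, the short Python sketch below converts them into counts. The base of one million users or messages is a hypothetical round number chosen for illustration, not a figure from OpenAI, and `expected_count` is an illustrative helper name.

```python
from fractions import Fraction


def expected_count(population: int, percent: str) -> Fraction:
    """Convert a percentage (given as a string) into an exact count."""
    return population * Fraction(percent) / 100


# Hypothetical base of one million, used only to make the rates tangible.
users_flagged = expected_count(1_000_000, "0.07")     # 700 users
messages_flagged = expected_count(1_000_000, "0.01")  # 100 messages
print(users_flagged, messages_flagged)
```

At that scale, 0.07% works out to 700 people and 0.01% to 100 messages, which shows how a small rate can still cover many individual conversations.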

Still, OpenAI treats these interactions as disproportionately important because of their impact potential.

These cases, though statistically small, could have serious real-world consequences if handled carelessly.

The company also clarified that these numbers are self-reported estimates, based on its internal taxonomies and clinician evaluations, not independently audited.

Yet the takeaway remains consistent: even rare misuse requires responsible prevention.

A Shift Toward “Human-in-the-Loop” AI Ethics

This development also highlights a broader industry movement: integrating mental health professionals and ethicists directly into AI training pipelines.

By embedding clinician guidance into model design, OpenAI is setting a precedent for “human-in-the-loop” ethical governance.

It is a recognition that machine empathy needs human oversight to remain safe.

The result is a more deliberate, socially aware AI that does not just avoid harm; it actively tries to steer users back toward healthier behaviors.

As OpenAI explained, these systems are now designed not just to respond, but to “intervene with care.”

What Comes Next for AI and Emotional Safety?

Could this be the start of a broader moral framework for conversational AI?

Very likely, yes.

OpenAI’s update represents an inflection point for the entire AI industry, forcing developers and policymakers to ask: How emotionally intimate should AI be allowed to become?

Future models may go beyond safety prompts to include personalization caps, limiting the model’s tone, memory, or emotional mimicry based on user behavior.

We might also see new standards emerge for AI wellness transparency, where companies disclose whether their tools are built to support or avoid emotional reliance.

For now, OpenAI is leading by example, redefining AI responsibility not as a reactive measure but as a proactive design philosophy.

The Takeaway

We turn to ChatGPT, sometimes, not for answers but for connection: for clarity when we are overwhelmed, or for comfort when we cannot find it elsewhere.

That is not a failure of technology; it’s a reflection of how digital life has blurred the boundaries between tools and relationships.

But OpenAI’s move reminds us of something fundamental: AI can listen, but it cannot love, understand, or replace human warmth.

By teaching ChatGPT to know its limits, OpenAI is not reducing its power. It is making it more responsible, more aligned with the emotional realities of the people who use it every day.

And perhaps, that’s the most human decision AI could ever make.

Dileep Thekkethil

Dileep Thekkethil is the Director of Marketing at Stan Ventures, where he applies over 15 years of SEO and digital marketing expertise to drive growth and authority. A former journalist with six years of experience, he combines strategic storytelling with technical know-how to help brands navigate the shift toward AI-driven search and generative engines. Dileep is a strong advocate for Google’s EEAT standards, regularly sharing real-world use cases and scenarios to demystify complex marketing trends. He is an avid gardener of tropical fruits, a motor enthusiast, and a dedicated caretaker of his pair of cockatiels.