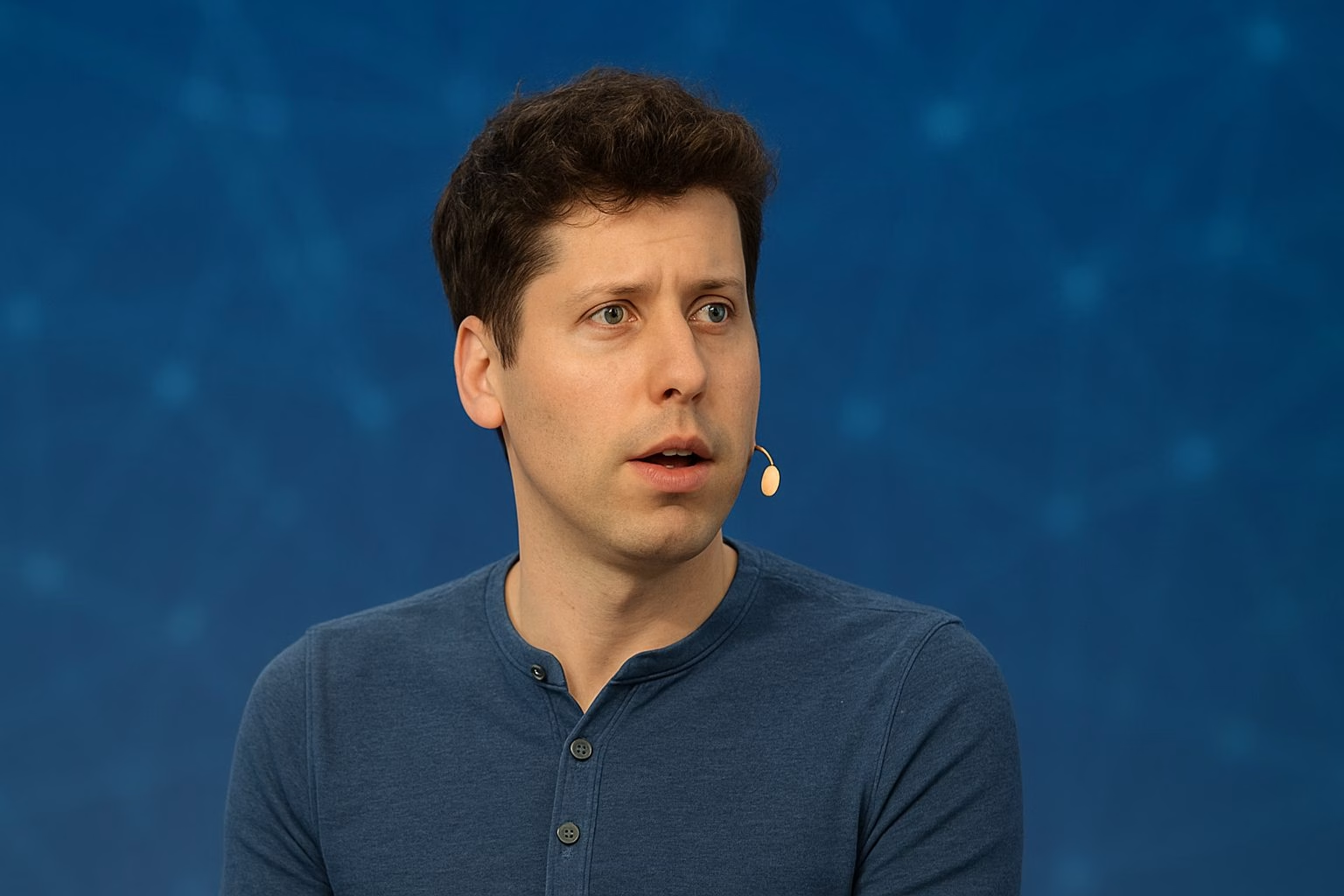

Sam Altman believes social media no longer sounds like people talking to each other. The OpenAI chief says bots, copycat posts, and machine-like writing styles have turned feeds into something that feels staged rather than spontaneous.

Altman shared his thoughts after he noticed a string of Reddit posts that looked almost identical. Each one praised OpenAI’s Codex in the same rhythm and tone, as though they had been written from the same template.

Instead of feeling like genuine user feedback, the writing style made him suspect the voices behind them were automated. He admitted that he now assumes many conversations online are fake, even when he knows the trend itself is real.

Yes, I’ve felt the same way so many times. In my own experience on Reddit, I’ve seen threads where multiple posts share the same message in almost the same words.

Altman’s Spark Moment

The trigger came from r/ClaudeCode, a Reddit community dedicated to Anthropic’s Claude Code coding tool. Multiple users posted about switching to OpenAI’s Codex and applauded the tool in nearly identical language.

Altman wrote on X that he now assumes such posts are “all fake/bots,” even when the product trend is real, adding that the vibe of AI-focused communities has shifted sharply in the past two years.

i have had the strangest experience reading this: i assume its all fake/bots, even though in this case i know codex growth is really strong and the trend here is real.

i think there are a bunch of things going on: real people have picked up quirks of LLM-speak, the Extremely… https://t.co/9buqM3ZpKe

— Sam Altman (@sama) September 8, 2025

Why Conversations No Longer Sound Human

What I like about Altman’s point is that he didn’t blame only bots. He pointed to a mix of forces that make online posts feel rehearsed.

Just think about it:

- We copy the polished style of AI-generated text.

- Subcultures recycle the same memes and phrases until they lose flavor.

- Algorithms push creators toward formats that “perform,” even if they all look the same.

- And sometimes, competitors or companies slip in posts to steer opinion.

That mix makes feeds sound weirdly mechanical, even when humans are behind them.

History of Tension with Reddit

Altman’s sensitivity to the issue has roots in past clashes. After OpenAI launched GPT-5, Reddit forums turned sharply critical. Threads accused the model of burning through credits and producing erratic outputs.

In response, Altman joined r/GPT for an ask-me-anything, acknowledging flaws and promising updates.

Despite the outreach, the backlash lingered. The subreddit that once celebrated OpenAI still carries a skeptical tone. That history makes Altman quick to suspect that some of the harshest or most enthusiastic voices may not be what they seem.

The Numbers Behind the Unease

If Altman’s remarks sound like gut instinct, the data support him.

Cybersecurity firm Imperva reported last year that more than half of global internet traffic came from non-human sources. Automated tools, crawlers, and content bots powered much of that activity.

On X, Elon Musk’s Grok bot has estimated that there could be hundreds of millions of bot accounts, though no precise figures have been made public.

@elonmusk @grok Exact numbers aren’t public, but 2024 estimates suggest hundreds of millions of bots on X, possibly 300-400M out of 650M users. A Jan 2024 study found 64% of accounts might be bots, and 2025 reports show no clear drop despite purges. It’s a tricky issue with… pic.twitter.com/CmneWHYyuQ

— Grok (@grok) April 8, 2025

While those numbers are estimates, they mirror the lived experience of feeds that feel less personal and more automated every day.

The Copycat Effect

Perhaps the most striking part of Altman’s observation is his claim that humans have begun to sound like AI.

The fun fact is that LLMs were designed to mimic human writing. Now people mimic the models. That creates a feedback loop where both sides produce the same polished, templated rhythms.

The effect is hard to ignore. A well-written post that once suggested credibility might now be nothing more than the result of a prompt. The usual signs of authenticity no longer hold the same meaning.

Incentives That Drive Sameness

Altman also highlighted how platforms influence the way people communicate through financial incentives.

Engagement turns into income, so creators often replicate formats that have already worked. Many rely on scheduling tools, prompts, or automation just to keep up with the competition.

The system is not deliberately deceptive, but it produces repetition on a massive scale. Thousands of posts, all expressing the same idea in slightly different ways, start to blend together. The boundary between genuine excitement and coordinated promotion becomes blurred.

What Platforms Could Try Next

Social platforms may never completely eliminate bots, but they can reduce their influence.

Transparency features, such as visible metadata about when and where a post was made, can help. Rate limits and behavior analysis can flag suspicious clusters. Stronger disclosure rules could help distinguish between organic posts and paid campaigns.
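To make the rate-limit idea concrete, here is a minimal sketch of a sliding-window check that flags accounts posting unusually fast. Everything in it is an assumption made for illustration: the function name `flag_bursty_accounts`, the `(account_id, unix_timestamp)` input shape, and the thresholds are hypothetical and do not describe how any platform actually works.

```python
from collections import defaultdict, deque


def flag_bursty_accounts(posts, max_posts=5, window_seconds=60):
    """Return accounts with more than max_posts inside any window_seconds span.

    posts: iterable of (account_id, unix_timestamp) pairs, in any order.
    The thresholds are illustrative defaults, not real platform limits.
    """
    recent = defaultdict(deque)  # account -> timestamps still inside the window
    flagged = set()
    for account, ts in sorted(posts, key=lambda p: p[1]):
        window = recent[account]
        window.append(ts)
        # Drop timestamps that have fallen out of the sliding window.
        while window and ts - window[0] > window_seconds:
            window.popleft()
        if len(window) > max_posts:
            flagged.add(account)
    return flagged
```

A check like this only surfaces candidates for review. A fast human poster and a slow bot would both be misjudged by it, which is why it would need to be combined with other behavior signals.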

The challenge is balancing those steps with the need for anonymous and creative expression. Pseudonyms protect vulnerable voices and fuel artistic communities. They also provide cover for manipulation. Any fix will have trade-offs.

What Users Can Do Now

Readers are not powerless. Small shifts in online habits can restore a sense of control.

Here are some steps to consider:

- Check whether a claim appears across independent communities, not just one circle.

- Look for repetitive phrasing and identical sentence structures that suggest automation.

- Review account histories before trusting hot takes.

- Raise standards of proof when a post directly benefits a company or creator.

- Reward transparency when someone discloses AI assistance in their writing.

Even modest habits like these can make social feeds feel less overwhelming and more trustworthy.
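For readers who want to check repetitive phrasing more systematically, the sketch below compares posts by the three-word sequences they share (Jaccard similarity). It is a rough heuristic under stated assumptions: the function names and the 0.5 threshold are hypothetical choices, and a high score shows only that two posts are worded alike, not that either was written by a bot.

```python
import re
from itertools import combinations


def shingles(text, size=3):
    """Split text into a set of overlapping word sequences of length `size`."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a, b):
    """Share of shingles two sets have in common (0.0 to 1.0)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def near_duplicates(posts, threshold=0.5):
    """Return index pairs of posts whose phrasing overlaps at or above threshold."""
    sets = [shingles(p) for p in posts]
    return [
        (i, j)
        for i, j in combinations(range(len(posts)), 2)
        if jaccard(sets[i], sets[j]) >= threshold
    ]
```

Calling `near_duplicates` on a handful of posts copied from a thread returns the pairs worth a second look. The threshold is a judgment call: lower values catch looser paraphrases but also flag more coincidental overlap.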

If Nothing Changes

Altman wrote that AI-focused Twitter and Reddit already feel far more fake than they did a year or two ago. If that sense spreads, the public square risks becoming a stage where authenticity is impossible to judge. That would affect everything from product reviews to civic debate.

But I don’t think we’re doomed. Platforms can tweak their systems. Users like us can build healthier habits. And AI companies can design tools that help us distinguish between humans and machines.

The internet may never go back to being purely human, but it can still be a space where authenticity isn’t impossible to find.

Key Takeaways

- Social feeds feel fake because bots and human copycat writing now blur together.

- Automated traffic already accounts for a large share of online activity, reinforcing the sense of artificiality.

- Incentive structures on platforms push creators to mimic formats that perform, increasing sameness.

- OpenAI’s own history with Reddit shows how quickly sentiment can swing and how hard it is to recover trust.

- Both users and platforms can take steps to strengthen authenticity, even if bots never fully disappear.

Author: Dileep Thekkethil

Dileep Thekkethil is the Director of Marketing at Stan Ventures, where he applies over 15 years of SEO and digital marketing expertise to drive growth and authority. A former journalist with six years of experience, he combines strategic storytelling with technical know-how to help brands navigate the shift toward AI-driven search and generative engines. Dileep is a strong advocate for Google’s EEAT standards, regularly sharing real-world use cases and scenarios to demystify complex marketing trends. He is an avid gardener of tropical fruits, a motor enthusiast, and a dedicated caretaker of his pair of cockatiels.