Introduction to Google BERT Update

All the talk in the SEO arena is about the recently announced Google BERT update. As with other Google algorithm updates, there is a fair bit of confusion among SEOs about the impact of what Google calls the biggest change to its algorithm in the last five years.

Google's official announcement says the BERT (Bidirectional Encoder Representations from Transformers) algorithm is a neural network-based technique for understanding natural language. Sounds confusing, right? But the concept is pretty simple.

What is BERT NLP Model?

BERT is an open-source, pre-trained Natural Language Processing (NLP) model developed by Google that interprets the intent and context of a search query by looking at how the words in the entire search phrase relate to one another. The BERT model tries to understand the context of a word based on all of the surrounding words, which makes the search results for conversational queries more accurate.

Here is an example of the BERT Model:

Above all, BERT joins Google’s elite club of Machine Learning Algorithms that learn as they encounter new information.

The language understanding capabilities of BERT will give users better results that satisfy their real intent.

Because users often express personal, specific needs through complex or conversational queries, it is hard for search engines like Google to interpret the intent behind them.

According to Google, people resort to unnatural strings of keywords because they fear that search engines may not understand conversational queries. With the Google BERT Update, the search engine giant is trying to bridge this specific gap.

Google BERT uses bidirectional language processing instead of the traditional left-to-right or right-to-left language processing models. Unlike shallow bidirectional approaches, which combine a separate left-to-right pass and a right-to-left pass, BERT uses a more sophisticated masked language model that tries to understand how each word relates to all the others.

Google has now integrated BERT into its Natural Language Processing to provide the best contextual representation of each word, not just the left or right context.
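To make the difference concrete, here is a minimal Python sketch of which words each approach can see when it interprets a single word in a query. The sample query, the function names, and the `[MASK]` placeholder handling are illustrative assumptions, not Google's implementation. A real BERT model learns a representation from the visible words rather than simply listing them.

```python
def left_to_right_context(tokens, i):
    """Words a left-to-right model has seen when it reaches position i."""
    return tokens[:i]


def right_to_left_context(tokens, i):
    """Words a right-to-left model has seen when it reaches position i."""
    return tokens[i + 1:]


def masked_context(tokens, i, mask="[MASK]"):
    """Masked-language-model view: hide position i, keep both sides visible."""
    return tokens[:i] + [mask] + tokens[i + 1:]


# Illustrative query (an assumption for this sketch).
query = "parking on a hill with no curb".split()
i = query.index("no")

print(left_to_right_context(query, i))  # only the words before "no"
print(right_to_left_context(query, i))  # only the words after "no"
print(masked_context(query, i))         # every other word, from both sides
```

The point of the masked view is that the model has to guess the hidden word from everything around it, so the words on both sides shape how that word is understood.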

Watch this Meetup video by Danny Luo explaining the technical aspects of Google BERT

BERT Algorithm: Impact on Voice Search

Voice Search is undoubtedly the next big thing in the SEO world and the Internet. Google has been pushing voice assistant-focused features over the last few years, and BERT can be considered the latest one.

The announcement says that the BERT Algorithm Update is the fruit of years of hard work put in by the language research team under its machine learning department. Google says BERT marks a milestone in search, calling it the biggest leap forward in the past five years and one of the biggest leaps forward in the history of Search.

With the advent of voice-based searches, and with personal assistant devices such as Google Home becoming part of everyone’s family, there is a significant need for better contextual search results.

The Origin of BERT Algorithm

The origin of the BERT Algorithm can be traced back to 2017, when the Google AI team introduced the Transformer, a novel neural network architecture for language understanding.

Transformer models process each word in relation to all the other words in a sentence rather than one-by-one in order.

The encoding concept of Transformers has been used to formulate the BERT model, which tries to understand the context of a word in relation to the words that precede and succeed it.

This helps Google better understand the intent of the search query and provide a search result that fulfills the intent of the user.
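The idea of scoring every word against every other word can be sketched with a toy version of self-attention, the core operation of a Transformer. This is a simplified illustration under stated assumptions: the two-dimensional word vectors are made up, and the learned query, key, and value projections that real Transformers use are left out. It is not the model Google runs in Search.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors):
    """Scaled dot-product self-attention with queries = keys = values.

    Real Transformers apply learned projections first; they are omitted here.
    """
    dim = len(vectors[0])
    weights, outputs = [], []
    for q in vectors:
        # Score this word against every word in the sentence, itself included.
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(dim) for k in vectors]
        row = softmax(scores)
        weights.append(row)
        # New representation: a weighted mix of all the word vectors.
        outputs.append([sum(w * v[d] for w, v in zip(row, vectors)) for d in range(dim)])
    return weights, outputs


# Hypothetical 2-dimensional vectors; real models learn hundreds of dimensions.
words = ["traveler", "to", "usa"]
vectors = [[1.0, 0.0], [0.5, 0.5], [0.0, 1.0]]

weights, outputs = self_attention(vectors)
for word, row in zip(words, weights):
    print(word, [round(w, 2) for w in row])
```

Each printed row shows how much one word draws on every word in the phrase, which is what lets a small word like "to" change the representation of the words around it.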

The search engine giant has also confirmed that the new search algorithm update is not limited to changes in software but extends to the hardware infrastructure as well.

To further push the limits of search, Google has started using Cloud TPUs to serve search results and provide more contextual information quickly.

The traditional hardware may limit the performance of BERT as there are new processes involved that require advanced technology.

Cloud TPUs, aka Tensor Processing Units, are custom-designed chips from Google that accelerate the performance of machine learning algorithms.

Impact of Google BERT Algorithm on Overall Search Results

Starting last week, Google has been using the BERT Algorithm to display search results. It has been confirmed that the new algorithm has impacted 1 out of 10 search queries entered on Google search.

Massive changes are expected for search queries that are longer and conversational. SEOs have long ignored prepositions and articles while optimizing pages, as they were never part of the keyword strategy.

However, with the new BERT update, SEOs now have to put a lot of thought into this, as a change in preposition could alter an entire search result.

With the BERT Algorithm now in place, Google search understands that a change in preposition matters a lot when it comes to fulfilling the search intent of the user. According to the official announcement, the final version of the BERT update was launched after thorough testing.

The announcement also emphasizes how BERT will impact Featured Snippets. It has been reported that 99% of voice search results are the ones that appear in Featured Snippets. Google has stated that it is using the BERT algorithm to pick more appropriate Featured Snippet results. This means many existing Featured Snippets may change.

Did BERT Algorithm Update Affect Your Website?

If you see an organic traffic decline after October 20th, especially with regard to Featured Snippets and voice search, there is a high chance that you were hit by the BERT update. Since this update is more content-focused, there is very little chance that technical improvements to the site will help improve rankings.

The focus here must be to improve the content quality based on the intent of the users. This makes Audience Mapping a focus area in SEO once again. The content created for a website must suit its target audience, and thus the presentation of the content and its style will now play a significant role in SEO.

Updated on 29-10-2019

Impact of BERT Update

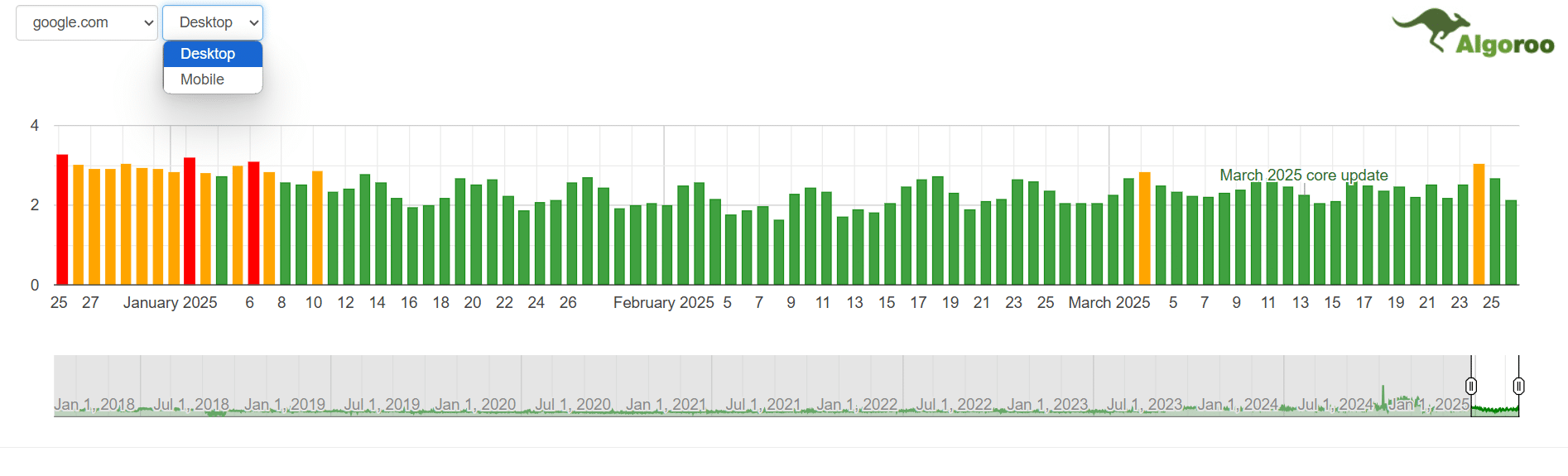

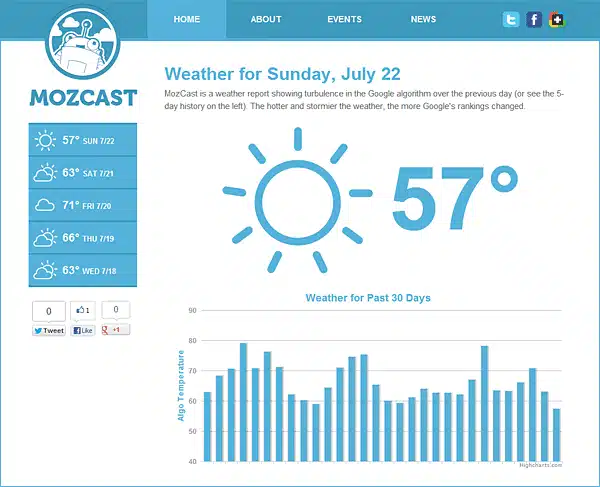

It’s been almost five days since Google launched its new algorithm update, christened the ‘BERT Update.’ However, the impact of the BERT Update has baffled the SEO world: either the shock that Google predicted never materialized, or SEOs overestimated the aftermath of BERT.

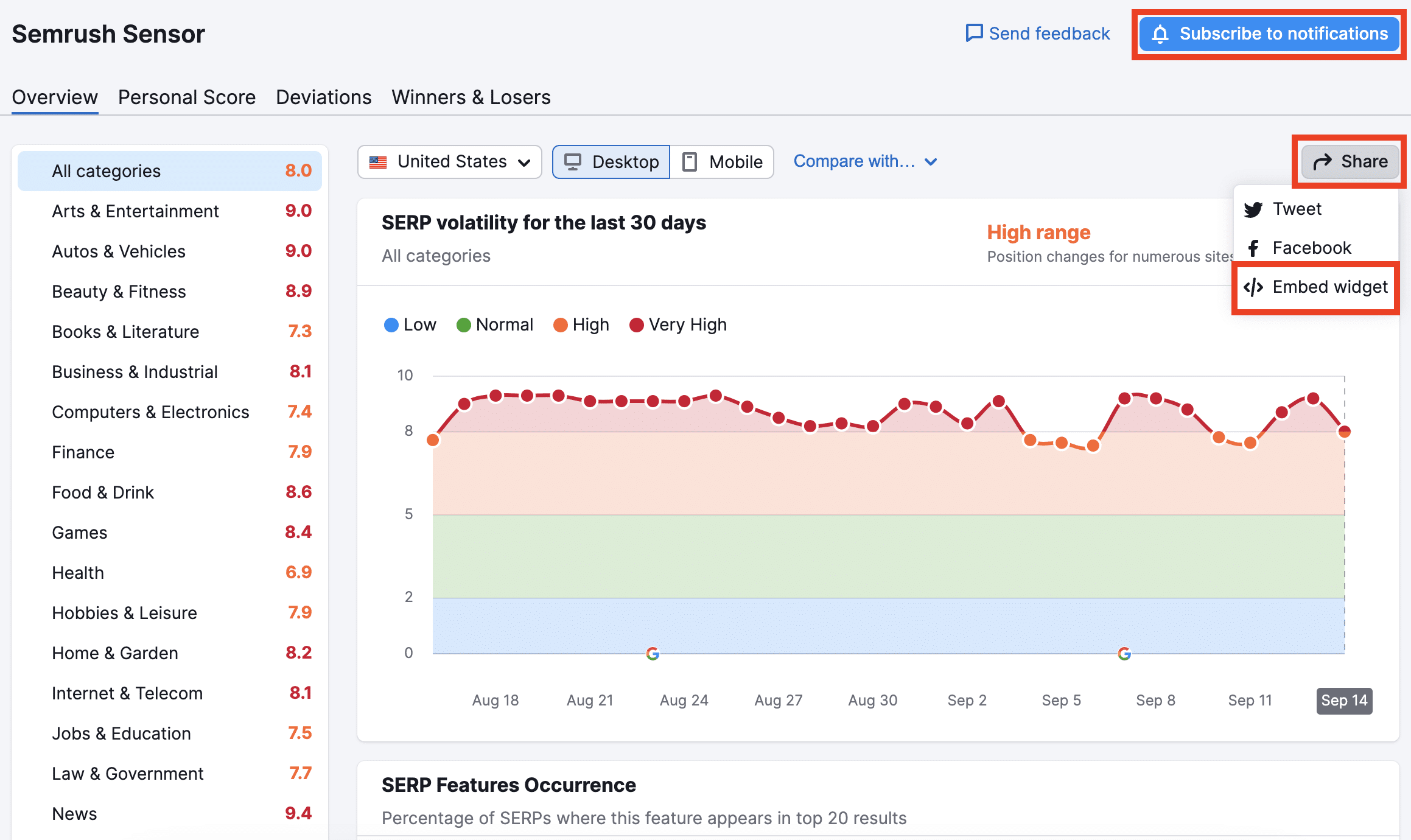

The announcement of the BERT Update came with a bold statement from Google that the new natural language processing algorithm will impact one out of ten search queries. However, algorithm trackers failed to notice any alarming fluctuation in rankings, making SEOs ask several questions about the BERT Update and its course of action.

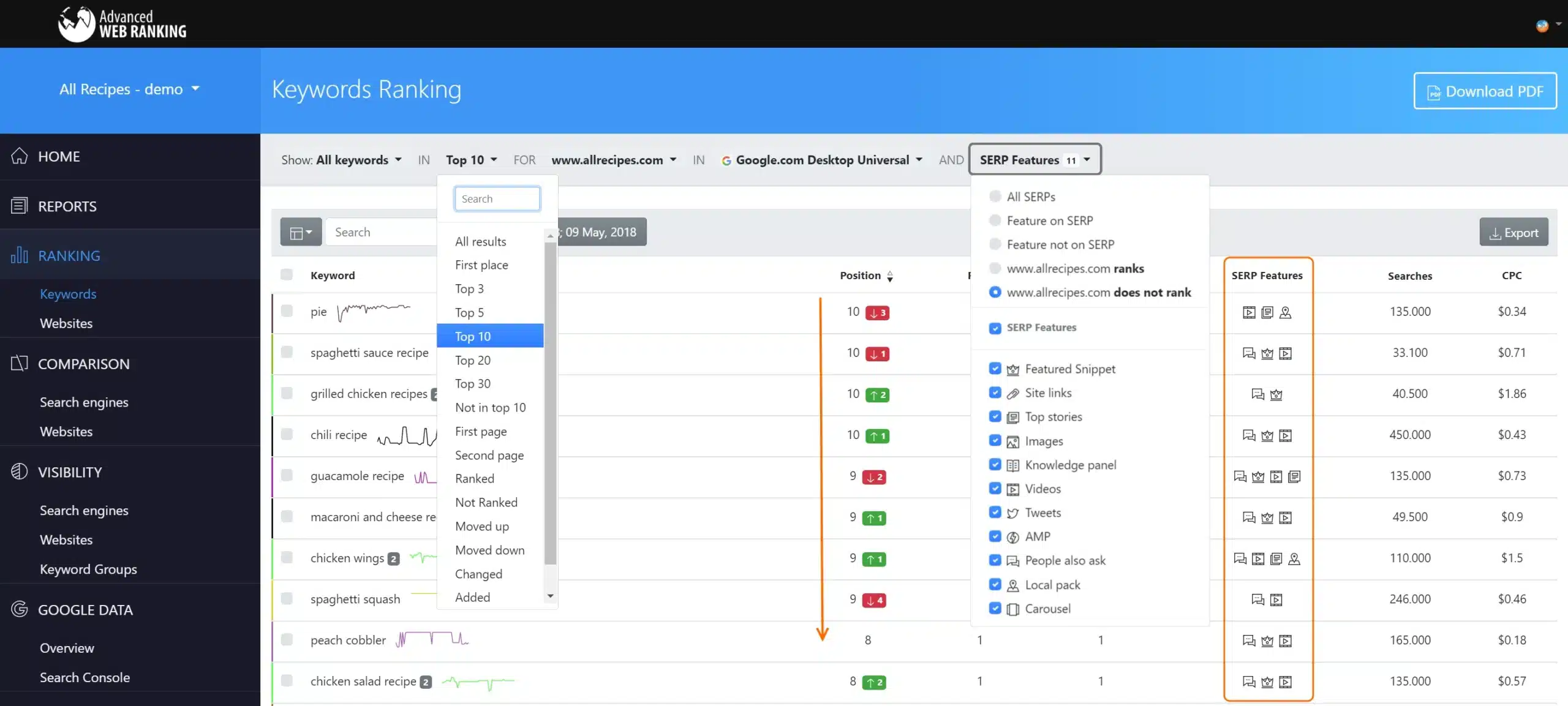

Data From Google Algorithm Trackers

However, this doesn’t mean that BERT has not made its impact. Most of the above trackers are focused on monitoring the changes in head keywords and shorter queries. On the flip side, BERT is more focused on long-tail queries that are conversational in nature.
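One rough way to check whether your own tracked keyword set even contains the kind of queries BERT targets is to split it into short head terms and longer conversational queries. The sketch below is only a heuristic under stated assumptions: the five-word threshold, the small list of function words, and the function names are all made up for illustration and are not rules published by Google.

```python
# Assumption: a small, illustrative set of function words; extend as needed.
FUNCTION_WORDS = {"for", "to", "with", "without", "from", "in", "on", "no"}


def is_conversational(query, min_words=5):
    """Heuristic: long queries that contain a function word count as conversational.

    The 5-word threshold is an arbitrary assumption, not a Google rule.
    """
    words = query.lower().split()
    return len(words) >= min_words and any(w in FUNCTION_WORDS for w in words)


def split_queries(queries):
    """Return (head_terms, conversational) lists, preserving input order."""
    head, conversational = [], []
    for q in queries:
        if is_conversational(q):
            conversational.append(q)
        else:
            head.append(q)
    return head, conversational


# Hypothetical tracked keywords.
tracked = [
    "seo tools",
    "bert update",
    "how to lose weight with intermittent fasting",
    "best running shoes for flat feet on a budget",
]
head, conversational = split_queries(tracked)
print("head:", head)
print("conversational:", conversational)
```

If almost everything lands in the head list, flat tracker charts say little about how BERT treated your long-tail traffic.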

New Opportunities with BERT Update

With the BERT update, the focus of your content is no longer about hitting a word count; it’s about providing clear information that’s valuable to your readers.

If you want to rank well for informational keywords, answer specific queries through your content better than your competitors do. Whether it takes slides, videos, infographics, flow charts, or audio, do whatever it takes to answer user queries.

Instead of focusing on long-form content that Google may interpret, and show in the SERP, in different ways, focus more on long-tail keywords.

On a positive note, if your search traffic drops a little due to the BERT algorithm update, don’t fret. Think of it this way: if people are looking for “how to lose weight with intermittent fasting” and your article talks throughout about the disadvantages of intermittent fasting, then people are just going to stop midway or even sooner and hit the back button.

Yeah, you are losing a chunk of traffic that way, but isn’t that better than rising bounce rates that ruin your metrics? Additionally, this will give you an opportunity to create content that is super-specific.

The official announcement from Google stated this explicitly:

Particularly for longer, more conversational queries, or searches where prepositions like “for” and “to” matter a lot to the meaning, Search will be able to understand the context of the words in your query. You can search in a way that feels natural for you.

BERT Update Live for 70 Languages

Google officially announced in December that BERT (Bidirectional Encoder Representations from Transformers) is now active for search results across more than 70 languages.

The Google BERT Update, rolled out in October and touted as the biggest update from Google in five years, is a far more advanced language processing algorithm derived from Google's Transformer project.

The BERT Algorithm is trained to understand words in relation to all the other words in a query, rather than one-by-one in order. This gives more weight to the intent and context of the search query and delivers the results that the user seeks.

The official Google SearchLiaison tweet says:

BERT, our new way for Google Search to better understand language, is now rolling out to over 70 languages worldwide. It initially launched in Oct. for US English. You can read more about BERT below & a full list of languages is in this thread…. https://t.co/NuKVdg6HYM

Google SearchLiaison (@searchliaison), December 9, 2019

Here is the list of languages that use the BERT natural language processing algorithm to display Google search results:

Afrikaans, Albanian, Amharic, Arabic, Armenian, Azeri, Basque, Belarusian, Bulgarian, Catalan, Chinese (Simplified & Taiwan), Croatian, Czech, Danish, Dutch, English, Estonian, Farsi, Finnish, French, Galician, Georgian, German, Greek, Gujarati, Hebrew, Hindi, Hungarian, Icelandic, Indonesian, Italian, Japanese, Javanese, Kannada, Kazakh, Khmer, Korean, Kurdish, Kyrgyz, Lao, Latvian, Lithuanian, Macedonian, Malay (Brunei Darussalam & Malaysia), Malayalam, Maltese, Marathi, Mongolian, Nepali, Norwegian, Polish, Portuguese, Punjabi, Romanian, Russian, Serbian, Sinhalese, Slovak, Slovenian, Swahili, Swedish, Tagalog, Tajik, Tamil, Telugu, Thai, Turkish, Ukrainian, Urdu, Uzbek, Vietnamese and Spanish.

Frequently Asked Questions About Google BERT Update

Does Google BERT Update impact languages other than US English?

Yes. BERT initially launched in October 2019 for general ranking in US English. In December 2019, Google announced that it was rolling out to more than 70 languages worldwide.

Will Google BERT Update impact Featured Snippets?

Yes. Google has confirmed that the BERT Update will make major changes in the way users will see featured snippets in 24 countries where the feature is now available. Users will see significant improvements in Featured Snippets that appear for languages like Korean, Hindi, and Portuguese.

How to optimize for Google BERT Update?

There is nothing to optimize for BERT. Danny Sullivan, Google’s public Search Liaison, has made it clear that SEOs have nothing specific to optimize for. However, he reiterated that the crux of the update lies in writing quality content for end users.

https://twitter.com/dannysullivan/status/1188698321113759744

Google BERT was touted as a big update, but why is there less talk about it?

Google termed the BERT Update “the biggest leap forward in the past five years, and one of the biggest leaps forward in the history of Search.” But SEOs felt little fluctuation from the update, whether positive or negative. BERT mostly affects conversational search queries rather than broad search terms. Most keyword tracking tools and algorithm trackers use short-tail and broad keywords for tracking purposes. This could be one of the reasons for the sedate reaction to the update.

Does Google use BERT to make sense of all searches?

BERT will enhance Google’s understanding of about 1 in 10 searches in the US in English. Particularly for conversational and longer queries, and for searches where prepositions add meaning to a phrase, Search will be able to detect the context of the query. However, brand searches and other short phrases are two situations where BERT’s natural language processing may not be required.

What other Google products might BERT affect?

Although Google announced that the BERT update will be limited to Search only, there might be some effect on the Google Assistant as well.

Dileep Thekkethil

Dileep Thekkethil is the Director of Marketing at Stan Ventures, where he applies over 15 years of SEO and digital marketing expertise to drive growth and authority. A former journalist with six years of experience, he combines strategic storytelling with technical know-how to help brands navigate the shift toward AI-driven search and generative engines. Dileep is a strong advocate for Google’s EEAT standards, regularly sharing real-world use cases and scenarios to demystify complex marketing trends. He is an avid gardener of tropical fruits, a motor enthusiast, and a dedicated caretaker of his pair of cockatiels.